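The OpenMP specification says that the iteration variable of the loop associated with the parallel for construct (in this case int i) is implicitly private, but any other variable declared before the parallel region (in this case j and k) is shared by default. As j and k are actually used as loop iteration variables inside the parallel region, sharing them creates a data race: every thread reads and writes the same two counters, so the threads trample all over each other's loop state (I believe that's the technical term used in the OpenMP spec). That is consistent with the segfault at gemm.c:126 in the trace below, where the array indices are built from j and k.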
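One way to fix this is sketched below. It assumes the loop nest in gemm_tn has the i/k/j shape suggested by line 126 of the trace; the function name gemm_tn_fixed is made up for illustration and this is not the project's actual code. The only change that matters is the private(j, k) clause, which gives each thread its own copy of the inner loop counters.

```c
#include <stddef.h>

/* Sketch: C += ALPHA * A^T * B, with the inner loop counters made private.
 * gemm_tn_fixed is a hypothetical name; the loop shape is an assumption
 * based on the statement shown at gemm.c:126 in the backtrace. */
void gemm_tn_fixed(int M, int N, int K, float ALPHA,
                   const float *A, int lda,
                   const float *B, int ldb,
                   float *C, int ldc)
{
    int i, j, k;
    /* i is private automatically because it is the loop variable of the
     * parallel for. j and k are declared outside the region, so they would
     * be shared unless listed in private(). */
    #pragma omp parallel for private(j, k)
    for (i = 0; i < M; ++i) {
        for (k = 0; k < K; ++k) {
            /* Declared inside the region, so A_PART is already per-thread. */
            float A_PART = ALPHA * A[k * lda + i];
            for (j = 0; j < N; ++j) {
                C[i * ldc + j] += A_PART * B[k * ldb + j];
            }
        }
    }
}
```

An equivalent option, if the code base allows C99, is to declare the counters in the loop headers (for (int k = 0; ...)), which makes them private without any clause. Adding default(none) to the pragma is also worth considering, since it forces every variable's sharing to be spelled out and turns this kind of mistake into a compile error. The sketch compiles with or without -fopenmp; without it the pragma is ignored and the loop runs serially.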

Program terminated with signal SIGSEGV, Segmentation fault.

#0 0x000055e47e85a544 in gemm_tn (M=<optimized out>, N=<optimized out>, K=<optimized out>, ALPHA=<optimized out>, A=<optimized out>, lda=<optimized out>, B=<optimized out>, ldb=0, C=0x0, ldc=0) at ./src/gemm.c:126

126 C[i*ldc+j] += A_PART*B[k*ldb+j];

[Current thread is 1 (Thread 0x7f1ee3050700 (LWP 20304))]

(gdb) thread apply all bt full

Thread 4 (Thread 0x7f1ee3851700 (LWP 20303)):

#0 0x000055e47e85a544 in gemm_tn (M=<optimized out>, N=<optimized out>, K=<optimized out>, ALPHA=<optimized out>, A=<optimized out>, lda=<optimized out>, B=<optimized out>, ldb=0, C=0x0, ldc=0) at ./src/gemm.c:126

A_PART = -0.0224672705

i = 578

j = 32767

k = -2140035008

#1 0x00007f1f02fc68be in gomp_thread_start (xdata=<optimized out>) at ../../../src/libgomp/team.c:120

team = 0x55e480717770

task = 0x55e480717e50

data = <optimized out>

pool = <optimized out>

local_fn = 0x55e47e85a453 <gemm_tn._omp_fn.2>

local_data = 0x7fff35fac540

#2 0x00007f1f02d9a494 in start_thread (arg=0x7f1ee3851700) at pthread_create.c:333

__res = <optimized out>

pd = 0x7f1ee3851700

now = <optimized out>

unwind_buf = {cancel_jmp_buf = {{jmp_buf = {139770642896640, 1786075862227522468, 0, 140734099015583, 0, 139771178414144, -1804626774660181084, -1803850822966930524},

mask_was_saved = 0}}, priv = {pad = {0x0, 0x0, 0x0, 0x0}, data = {prev = 0x0, cleanup = 0x0, canceltype = 0}}}

not_first_call = <optimized out>

pagesize_m1 = <optimized out>

sp = <optimized out>

freesize = <optimized out>

__PRETTY_FUNCTION__ = "start_thread"

#3 0x00007f1f02adca8f in clone () at ../sysdeps/unix/sysv/linux/x86_64/clone.S:97

No locals.

Thread 3 (Thread 0x7f1f036d4500 (LWP 20289)):

#0 0x000055e47e85a53d in gemm_tn (M=<optimized out>, N=<optimized out>, K=<optimized out>, ALPHA=<optimized out>, A=<optimized out>, lda=<optimized out>, B=<optimized out>, ldb=1152, C=0x7f1eeced8010, ldc=1776) at ./src/gemm.c:126

A_PART = 0.0450726002

i = 2

j = 0

k = 1776

#1 0x00007f1f02fbda9f in GOMP_parallel (fn=0x55e47e85a453 <gemm_tn._omp_fn.2>, data=0x7fff35fac540, num_threads=4, flags=0) at ../../../src/libgomp/parallel.c:168

No locals.

#2 0x000055e47e85a8de in gemm_tn (M=M@entry=1152, N=N@entry=1776, K=<optimized out>, ALPHA=<optimized out>, A=<optimized out>, lda=lda@entry=1152, B=B@entry=0x7f1eef738010, ldb=1776, C=0x7f1eeced8010, ldc=1776) at ./src/gemm.c:121

j = 799

k = 0

#3 0x000055e47e85aa3d in gemm_cpu (TA=TA@entry=1, TB=TB@entry=0, M=M@entry=1152, N=N@entry=1776, K=K@entry=128, ALPHA=ALPHA@entry=1, A=A@entry=0x7f1f0358b010, lda=1152, B=0x7f1eef738010, ldb=1776, BETA=BETA@entry=0, C=0x7f1eeced8010, ldc=1776) at ./src/gemm.c:167

i = <optimized out>

j = <optimized out>

#4 0x000055e47e85abf5 in gemm (TA=TA@entry=1, TB=TB@entry=0, M=M@entry=1152, N=N@entry=1776, K=K@entry=128, ALPHA=ALPHA@entry=1, A=A@entry=0x7f1f0358b010, lda=1152, B=0x7f1eef738010, ldb=1776, BETA=BETA@entry=0, C=0x7f1eeced8010, ldc=1776) at ./src/gemm.c:77

No locals.

#5 0x000055e47e82af86 in backward_convolutional_layer (l=..., net=...) at ./src/convolutional_layer.c:510

a = 0x7f1f0358b010

b = 0x7f1eef738010

c = 0x7f1eeced8010

im = 0x7f1ef05ab010

i = 0

m = 128

n = 1152

k = 1776

#6 0x000055e47e845650 in backward_network (net=...) at ./src/network.c:261

l = {type = CONVOLUTIONAL, activation = LEAKY, cost_type = SSE, forward = 0x55e47e82a85b <forward_convolutional_layer>, backward = 0x55e47e82ac9c <backward_convolutional_layer>,

update = 0x55e47e829d5e <update_convolutional_layer>, forward_gpu = 0x0, backward_gpu = 0x0, update_gpu = 0x0, batch_normalize = 0, shortcut = 0, batch = 1, forced = 0,

flipped = 0, inputs = 227328, outputs = 227328, nweights = 147456, nbiases = 128, extra = 0, truths = 0, h = 48, w = 37, c = 128, out_h = 48, out_w = 37, out_c = 128, n = 128,

max_boxes = 0, groups = 0, size = 3, side = 0, stride = 1, reverse = 0, flatten = 0, spatial = 0, pad = 1, sqrt = 0, flip = 0, index = 0, binary = 0, xnor = 0, steps = 0,

hidden = 0, truth = 0, smooth = 0, dot = 0, angle = 0, jitter = 0, saturation = 0, exposure = 0, shift = 0, ratio = 0, learning_rate_scale = 1, softmax = 0, classes = 0,

coords = 0, background = 0, rescore = 0, objectness = 0, does_cost = 0, joint = 0, noadjust = 0, reorg = 0, log = 0, tanh = 0, alpha = 0, beta = 0, kappa = 0, coord_scale = 0,

object_scale = 0, noobject_scale = 0, mask_scale = 0, class_scale = 0, bias_match = 0, random = 0, thresh = 0, classfix = 0, absolute = 0, onlyforward = 0, stopbackward = 0,

dontload = 0, dontloadscales = 0, temperature = 0, probability = 0, scale = 0, cweights = 0x0, indexes = 0x0, input_layers = 0x0, input_sizes = 0x0, map = 0x0, rand = 0x0,

cost = 0x0, state = 0x0, prev_state = 0x0, forgot_state = 0x0, forgot_delta = 0x0, state_delta = 0x0, combine_cpu = 0x0, combine_delta_cpu = 0x0, concat = 0x0, concat_delta = 0x0,

binary_weights = 0x0, biases = 0x55e48071ad00, bias_updates = 0x55e4807180e0, scales = 0x0, scale_updates = 0x0, weights = 0x7f1f0358b010, weight_updates = 0x7f1f0275f010,

delta = 0x7f1eef738010, output = 0x7f1eefc09010, squared = 0x0, norms = 0x0, spatial_mean = 0x0, mean = 0x0, variance = 0x0, mean_delta = 0x0, variance_delta = 0x0,

rolling_mean = 0x0, rolling_variance = 0x0, x = 0x0, x_norm = 0x0, m = 0x0, v = 0x0, bias_m = 0x0, bias_v = 0x0, scale_m = 0x0, scale_v = 0x0, z_cpu = 0x0, r_cpu = 0x0,

h_cpu = 0x0, prev_state_cpu = 0x0, temp_cpu = 0x0, temp2_cpu = 0x0, temp3_cpu = 0x0, dh_cpu = 0x0, hh_cpu = 0x0, prev_cell_cpu = 0x0, cell_cpu = 0x0, f_cpu = 0x0, i_cpu = 0x0,

g_cpu = 0x0, o_cpu = 0x0, c_cpu = 0x0, dc_cpu = 0x0, binary_input = 0x0, input_layer = 0x0, self_layer = 0x0, output_layer = 0x0, reset_layer = 0x0, update_layer = 0x0,

state_layer = 0x0, input_gate_layer = 0x0, state_gate_layer = 0x0, input_save_layer = 0x0, state_save_layer = 0x0, input_state_layer = 0x0, state_state_layer = 0x0,

input_z_layer = 0x0, state_z_layer = 0x0, input_r_layer = 0x0, state_r_layer = 0x0, input_h_layer = 0x0, state_h_layer = 0x0, wz = 0x0, uz = 0x0, wr = 0x0, ur = 0x0, wh = 0x0,

uh = 0x0, uo = 0x0, wo = 0x0, uf = 0x0, wf = 0x0, ui = 0x0, wi = 0x0, ug = 0x0, wg = 0x0, softmax_tree = 0x0, workspace_size = 8183808}

i = 7

orig = {n = 17, batch = 1, seen = 0x55e480714030, t = 0x55e480714050, epoch = 0, subdivisions = 1, layers = 0x55e4807239e0, output = 0x55e4813fbb40, policy = CONSTANT,

learning_rate = 0.00999999978, momentum = 0.899999976, decay = 0.000500000024, gamma = 0, scale = 0, power = 4, time_steps = 1, step = 0, max_batches = 0, scales = 0x0,

steps = 0x0, num_steps = 0, burn_in = 0, adam = 0, B1 = 0, B2 = 0, eps = 0, inputs = 83916, outputs = 30720, truths = 30720, notruth = 0, h = 189, w = 148, c = 3, max_crop = 20,

min_crop = 10, center = 0, angle = 0, aspect = 1, exposure = 1, saturation = 1, hue = 0, gpu_index = -1, hierarchy = 0x0, input = 0x55e481b33ba0, truth = 0x55e481505aa0,

delta = 0x55e481419b50, workspace = 0x7f1eeced8010, train = 0, index = 0, cost = 0x55e480714070}

#7 0x000055e47e824323 in optimize_picture (net=net@entry=0x7fff35fade70, orig=..., max_layer=max_layer@entry=16, scale=<optimized out>, rate=rate@entry=0.0399999991, thresh=thresh@entry=1, norm=norm@entry=1) at ./examples/nightmare.c:70

dx = 1

dy = 5

flip = 0

crop = {w = 352, h = 448, c = 3, data = 0x55e481797b80}

im = {w = 148, h = 189, c = 3, data = 0x55e481b33ba0}

last = {type = CONVOLUTIONAL, activation = LEAKY, cost_type = SSE, forward = 0x55e47e82a85b <forward_convolutional_layer>, backward = 0x55e47e82ac9c <backward_convolutional_layer>,

update = 0x55e47e829d5e <update_convolutional_layer>, forward_gpu = 0x0, backward_gpu = 0x0, update_gpu = 0x0, batch_normalize = 0, shortcut = 0, batch = 1, forced = 0,

flipped = 0, inputs = 30720, outputs = 30720, nweights = 9437184, nbiases = 1024, extra = 0, truths = 0, h = 6, w = 5, c = 1024, out_h = 6, out_w = 5, out_c = 1024, n = 1024,

max_boxes = 0, groups = 0, size = 3, side = 0, stride = 1, reverse = 0, flatten = 0, spatial = 0, pad = 1, sqrt = 0, flip = 0, index = 0, binary = 0, xnor = 0, steps = 0,

hidden = 0, truth = 0, smooth = 0, dot = 0, angle = 0, jitter = 0, saturation = 0, exposure = 0, shift = 0, ratio = 0, learning_rate_scale = 1, softmax = 0, classes = 0,

coords = 0, background = 0, rescore = 0, objectness = 0, does_cost = 0, joint = 0, noadjust = 0, reorg = 0, log = 0, tanh = 0, alpha = 0, beta = 0, kappa = 0, coord_scale = 0,

object_scale = 0, noobject_scale = 0, mask_scale = 0, class_scale = 0, bias_match = 0, random = 0, thresh = 0, classfix = 0, absolute = 0, onlyforward = 0, stopbackward = 0,

dontload = 0, dontloadscales = 0, temperature = 0, probability = 0, scale = 0, cweights = 0x0, indexes = 0x0, input_layers = 0x0, input_sizes = 0x0, map = 0x0, rand = 0x0,

cost = 0x0, state = 0x0, prev_state = 0x0, forgot_state = 0x0, forgot_delta = 0x0, state_delta = 0x0, combine_cpu = 0x0, combine_delta_cpu = 0x0, concat = 0x0, concat_delta = 0x0,

binary_weights = 0x0, biases = 0x55e4807837d0, bias_updates = 0x55e4807847e0, scales = 0x0, scale_updates = 0x0, weights = 0x7f1efbd94010, weight_updates = 0x7f1ef9993010,

delta = 0x55e481495b50, output = 0x55e4813fbb40, squared = 0x0, norms = 0x0, spatial_mean = 0x0, mean = 0x0, variance = 0x0, mean_delta = 0x0, variance_delta = 0x0,

rolling_mean = 0x0, rolling_variance = 0x0, x = 0x0, x_norm = 0x0, m = 0x0, v = 0x0, bias_m = 0x0, bias_v = 0x0, scale_m = 0x0, scale_v = 0x0, z_cpu = 0x0, r_cpu = 0x0,

h_cpu = 0x0, prev_state_cpu = 0x0, temp_cpu = 0x0, temp2_cpu = 0x0, temp3_cpu = 0x0, dh_cpu = 0x0, hh_cpu = 0x0, prev_cell_cpu = 0x0, cell_cpu = 0x0, f_cpu = 0x0, i_cpu = 0x0,

g_cpu = 0x0, o_cpu = 0x0, c_cpu = 0x0, dc_cpu = 0x0, binary_input = 0x0, input_layer = 0x0, self_layer = 0x0, output_layer = 0x0, reset_layer = 0x0, update_layer = 0x0,

state_layer = 0x0, input_gate_layer = 0x0, state_gate_layer = 0x0, input_save_layer = 0x0, state_save_layer = 0x0, input_state_layer = 0x0, state_state_layer = 0x0,

input_z_layer = 0x0, state_z_layer = 0x0, input_r_layer = 0x0, state_r_layer = 0x0, input_h_layer = 0x0, state_h_layer = 0x0, wz = 0x0, uz = 0x0, wr = 0x0, ur = 0x0, wh = 0x0,

uh = 0x0, uo = 0x0, wo = 0x0, uf = 0x0, wf = 0x0, ui = 0x0, wi = 0x0, ug = 0x0, wg = 0x0, softmax_tree = 0x0, workspace_size = 1105920}

delta = {w = 148, h = 189, c = 3, data = 0x55e481419b50}

resized = {w = -2139878024, h = 21988, c = -2119842072, data = 0x55e481918228}

out = {w = -2118441080, h = 21988, c = -2119970712, data = 0x55e48079ef48}

#8 0x000055e47e824f3c in run_nightmare (argc=<optimized out>, argv=<optimized out>) at ./examples/nightmare.c:383

layer = 16

octave = <optimized out>

buff = "scream_jnet-conv_16_000000", '\000' <repeats 14 times>, "Ȅp\003\037\177\000\000\240\335\372\065\377\177\000\000\250Ip\003\001\000\000\000p\201p\003\037\177\000\000\220\335\372\065\377\177\000\000\335\f\200~\344U\000\000^\226\223\034\000\000\000\000\377\377\377\377\000\000\000\000\260\335\372\065\377\177\000\000\000C\240\002\037\177\000\000\200Sp\003\037\177\000\000\377\377\377\377\000\000\000\000|L\\t\000\000\000\000\220W\331\002\037\177\000\000\230Np\003\037\177\000\000\270\\\331\002\037\177\000\000\230Np\003\037\177\000\000\060\200\000\000\000\000\000\000\001\000\000\000\000\000\000\000@\341\372\065\377\177\000\000\220"...

crop = {w = 352, h = 448, c = 3, data = 0x55e481797b80}

resized = {w = 352, h = 448, c = 3, data = 0x55e481965b90}

cfg = <optimized out>

weights = <optimized out>

input = <optimized out>

range = 1

norm = 1

rounds = 30

iters = 1

octaves = 4

zoom = 1

rate = 0.0399999991

thresh = 1

rotate = 0

momentum = 0.899999976

lambda = 0.00999999978

prefix = 0x0

reconstruct = 0

smooth_size = 1

net = {n = 17, batch = 1, seen = 0x55e480714030, t = 0x55e480714050, epoch = 0, subdivisions = 1, layers = 0x55e4807239e0, output = 0x55e4813fbb40, policy = CONSTANT,

learning_rate = 0.00999999978, momentum = 0.899999976, decay = 0.000500000024, gamma = 0, scale = 0, power = 4, time_steps = 1, step = 0, max_batches = 0, scales = 0x0,

steps = 0x0, num_steps = 0, burn_in = 0, adam = 0, B1 = 0, B2 = 0, eps = 0, inputs = 83916, outputs = 30720, truths = 30720, notruth = 0, h = 189, w = 148, c = 3, max_crop = 20,

min_crop = 10, center = 0, angle = 0, aspect = 1, exposure = 1, saturation = 1, hue = 0, gpu_index = -1, hierarchy = 0x0, input = 0x55e481b33ba0, truth = 0x55e481505aa0,

delta = 0x55e481419b50, workspace = 0x7f1eeced8010, train = 0, index = 0, cost = 0x55e480714070}

cfgbase = 0x55e480714240 "jnet-conv"

imbase = 0x55e480714260 "scream"

im = {w = 352, h = 448, c = 3, data = 0x55e481965b90}

features = 0x0

update = {w = 0, h = 0, c = 55516324, data = 0x1}

e = 1

n = 0

#9 0x000055e47e8286b3 in main (argc=10, argv=0x7fff35fae0e8) at ./examples/darknet.c:484

No locals.

Thread 2 (Thread 0x7f1ee4052700 (LWP 20302)):

#0 0x000055e47e85a555 in gemm_tn (M=<optimized out>, N=<optimized out>, K=<optimized out>, ALPHA=<optimized out>, A=<optimized out>, lda=<optimized out>, B=<optimized out>, ldb=-469426448, C=0x0, ldc=0) at ./src/gemm.c:125

A_PART = 0.0188630037

i = 291

j = 32767

k = -2140035008

#1 0x00007f1f02fc68be in gomp_thread_start (xdata=<optimized out>) at ../../../src/libgomp/team.c:120

team = 0x55e480717770

task = 0x55e480717d80

data = <optimized out>

pool = <optimized out>

local_fn = 0x55e47e85a453 <gemm_tn._omp_fn.2>

local_data = 0x7fff35fac540

#2 0x00007f1f02d9a494 in start_thread (arg=0x7f1ee4052700) at pthread_create.c:333

__res = <optimized out>

pd = 0x7f1ee4052700

now = <optimized out>

unwind_buf = {cancel_jmp_buf = {{jmp_buf = {139770651289344, 1786075862227522468, 0, 140734099015583, 0, 139771178414144, -1804638868751215708, -1803850822966930524},

mask_was_saved = 0}}, priv = {pad = {0x0, 0x0, 0x0, 0x0}, data = {prev = 0x0, cleanup = 0x0, canceltype = 0}}}

not_first_call = <optimized out>

pagesize_m1 = <optimized out>

sp = <optimized out>

freesize = <optimized out>

__PRETTY_FUNCTION__ = "start_thread"

#3 0x00007f1f02adca8f in clone () at ../sysdeps/unix/sysv/linux/x86_64/clone.S:97

No locals.

Thread 1 (Thread 0x7f1ee3050700 (LWP 20304)):

#0 0x000055e47e85a544 in gemm_tn (M=<optimized out>, N=<optimized out>, K=<optimized out>, ALPHA=<optimized out>, A=<optimized out>, lda=<optimized out>, B=<optimized out>, ldb=0, C=0x0, ldc=0) at ./src/gemm.c:126

A_PART = -0.0280113202

i = 865

j = 32767

k = -2140035008

#1 0x00007f1f02fc68be in gomp_thread_start (xdata=<optimized out>) at ../../../src/libgomp/team.c:120

team = 0x55e480717770

task = 0x55e480717f20

data = <optimized out>

pool = <optimized out>

local_fn = 0x55e47e85a453 <gemm_tn._omp_fn.2>

local_data = 0x7fff35fac540

#2 0x00007f1f02d9a494 in start_thread (arg=0x7f1ee3050700) at pthread_create.c:333

__res = <optimized out>

pd = 0x7f1ee3050700

now = <optimized out>

unwind_buf = {cancel_jmp_buf = {{jmp_buf = {139770634503936, 1786075862227522468, 0, 140734099015583, 0, 139771178414144, -1804627879003647068, -1803850822966930524},

mask_was_saved = 0}}, priv = {pad = {0x0, 0x0, 0x0, 0x0}, data = {prev = 0x0, cleanup = 0x0, canceltype = 0}}}

not_first_call = <optimized out>

pagesize_m1 = <optimized out>

sp = <optimized out>

freesize = <optimized out>

__PRETTY_FUNCTION__ = "start_thread"

#3 0x00007f1f02adca8f in clone () at ../sysdeps/unix/sysv/linux/x86_64/clone.S:97

No locals.

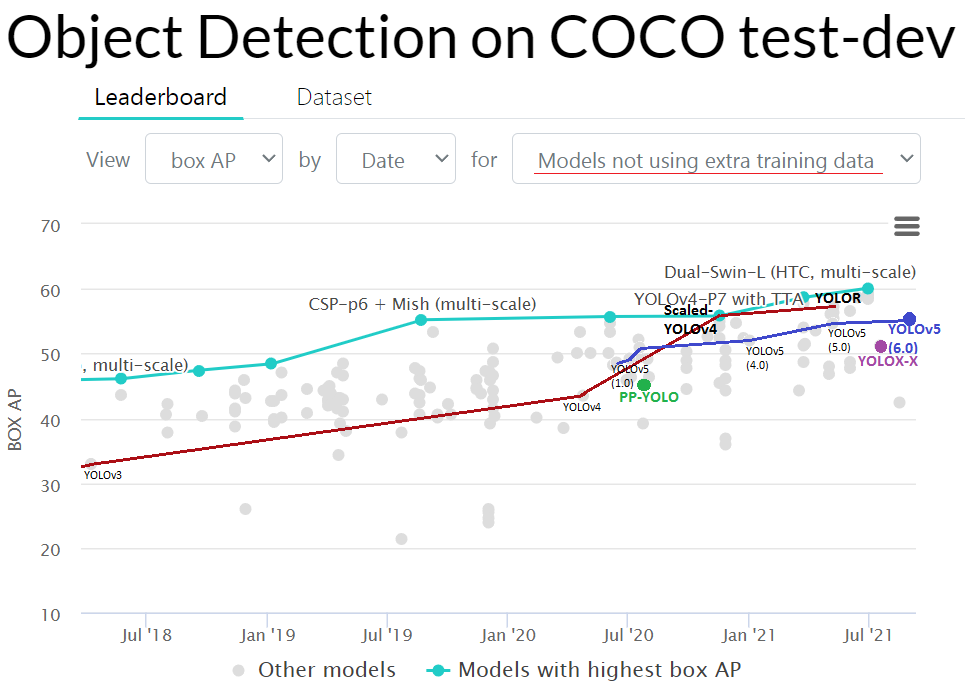


https://paperswithcode.com/sota/object-detection-on-coco AP50:95 - FPS (Tesla V100) Paper: https://arxiv.org/abs/2011.08036
