Traceback (most recent call last):

File "examples/legacy/image_classification_accelerate.py", line 217, in <module>

main(argparser.parse_args())

File "examples/legacy/image_classification_accelerate.py", line 198, in main

train(teacher_model, student_model, dataset_dict, ckpt_file_path, device, device_ids, distributed, config, args, accelerator)

File "examples/legacy/image_classification_accelerate.py", line 129, in train

train_one_epoch(training_box, device, epoch, log_freq)

File "examples/legacy/image_classification_accelerate.py", line 71, in train_one_epoch

training_box.update_params(loss)

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torchdistill/core/distillation.py", line 316, in update_params

self.optimizer.step()

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/accelerate/optimizer.py", line 133, in step

self.scaler.step(self.optimizer, closure)

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torch/cuda/amp/grad_scaler.py", line 339, in step

assert len(optimizer_state["found_inf_per_device"]) > 0, "No inf checks were recorded for this optimizer."

AssertionError: No inf checks were recorded for this optimizer.

datasets:

ilsvrc2012:

name: &dataset_name 'ilsvrc2012'

type: 'ImageFolder'

root: &root_dir !join ['/workspace/sync/imagenet-1k']

splits:

train:

dataset_id: &imagenet_train !join [*dataset_name, '/train']

params:

root: !join [*root_dir, '/train']

transform_params:

- type: 'RandomResizedCrop'

params:

size: &input_size [224, 224]

- type: 'RandomHorizontalFlip'

params:

p: 0.5

- &totensor

type: 'ToTensor'

params:

- &normalize

type: 'Normalize'

params:

mean: [0.485, 0.456, 0.406]

std: [0.229, 0.224, 0.225]

val:

dataset_id: &imagenet_val !join [*dataset_name, '/val']

params:

root: !join [*root_dir, '/val']

transform_params:

- type: 'Resize'

params:

size: 256

- type: 'CenterCrop'

params:

size: *input_size

- *totensor

- *normalize

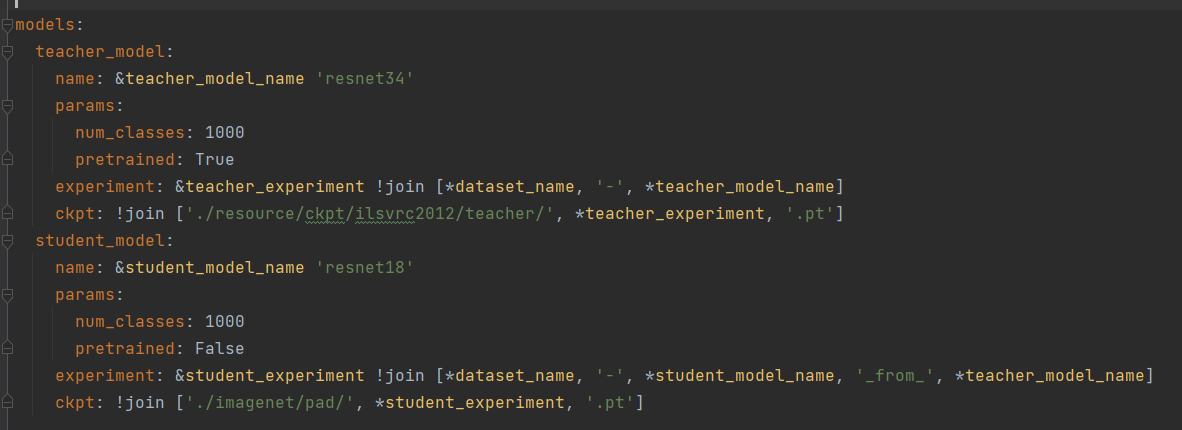

models:

teacher_model:

name: &teacher_model_name 'maskedvit_base_patch16_224'

params:

num_classes: 1000

pretrained: True

mask_ratio: 0.0

experiment: &teacher_experiment !join [*dataset_name, '-', *teacher_model_name]

ckpt: !join ['./resource/ckpt/ilsvrc2012/teacher/', *teacher_experiment, '.pt']

student_model:

name: &student_model_name 'maskedvit_base_patch16_224'

params:

num_classes: 1000

pretrained: False

mask_ratio: 0.5

experiment: &student_experiment !join [*dataset_name, '-', *student_model_name, '_from_', *teacher_model_name]

ckpt: !join ['./imagenet/mask_distillation/', *student_experiment, '.pt']

train:

log_freq: 1000

num_epochs: 100

train_data_loader:

dataset_id: *imagenet_train

random_sample: True

batch_size: 64

num_workers: 16

cache_output:

val_data_loader:

dataset_id: *imagenet_val

random_sample: False

batch_size: 128

num_workers: 16

teacher:

sequential: []

forward_hook:

input: []

output: ['mask_filter']

wrapper: 'DataParallel'

requires_grad: False

student:

adaptations:

sequential: []

frozen_modules: []

forward_hook:

input: []

output: ['mask_filter']

wrapper: 'DistributedDataParallel'

requires_grad: True

optimizer:

type: 'SGD'

grad_accum_step: 16

max_grad_norm: 5.0

module_wise_params:

- params: ['mask_token', 'cls_token', 'pos_embed']

is_teacher: None

module: None

weight_decay: 0.0

params:

lr: 0.001

momentum: 0.9

weight_decay: 0.0001

scheduler:

type: 'MultiStepLR'

params:

milestones: [30, 60, 90]

gamma: 0.1

criterion:

type: 'GeneralizedCustomLoss'

org_term:

criterion:

type: 'CrossEntropyLoss'

params:

reduction: 'mean'

factor: 1.0

sub_terms:

GenerativeKDLoss:

criterion:

type: 'GenerativeKDLoss'

params:

student_module_io: 'output'

student_module_path: 'mask_filter'

teacher_module_io: 'output'

teacher_module_path: 'mask_filter'

factor: 1.0

test:

test_data_loader:

dataset_id: *imagenet_val

random_sample: False

batch_size: 1

num_workers: 16

(pytorch_1) root@baa8ef5448b2:/workspace/sync/torchdistill# accelerate launch examples/legacy/image_classification_accelerate.py --config /workspace/sync/torchdistill/configs/legacy/official/ilsvrc2012/yoshitomo-matsubara/rrpr2020/at-vit-base_from_vit-base.yaml

2023/08/15 02:49:09 INFO __main__ Namespace(adjust_lr=False, config='/workspace/sync/torchdistill/configs/legacy/official/ilsvrc2012/yoshitomo-matsubara/rrpr2020/at-vit-base_from_vit-base.yaml', device='cuda', dist_url='env://', log=None, log_config=False, seed=None, start_epoch=0, student_only=False, test_only=False, world_size=1)

2023/08/15 02:49:09 INFO torch.distributed.distributed_c10d Added key: store_based_barrier_key:1 to store for rank: 0

2023/08/15 02:49:09 INFO __main__ Namespace(adjust_lr=False, config='/workspace/sync/torchdistill/configs/legacy/official/ilsvrc2012/yoshitomo-matsubara/rrpr2020/at-vit-base_from_vit-base.yaml', device='cuda', dist_url='env://', log=None, log_config=False, seed=None, start_epoch=0, student_only=False, test_only=False, world_size=1)

2023/08/15 02:49:09 INFO torch.distributed.distributed_c10d Added key: store_based_barrier_key:1 to store for rank: 1

2023/08/15 02:49:09 INFO __main__ Namespace(adjust_lr=False, config='/workspace/sync/torchdistill/configs/legacy/official/ilsvrc2012/yoshitomo-matsubara/rrpr2020/at-vit-base_from_vit-base.yaml', device='cuda', dist_url='env://', log=None, log_config=False, seed=None, start_epoch=0, student_only=False, test_only=False, world_size=1)

2023/08/15 02:49:09 INFO __main__ Namespace(adjust_lr=False, config='/workspace/sync/torchdistill/configs/legacy/official/ilsvrc2012/yoshitomo-matsubara/rrpr2020/at-vit-base_from_vit-base.yaml', device='cuda', dist_url='env://', log=None, log_config=False, seed=None, start_epoch=0, student_only=False, test_only=False, world_size=1)

2023/08/15 02:49:09 INFO torch.distributed.distributed_c10d Added key: store_based_barrier_key:1 to store for rank: 2

2023/08/15 02:49:09 INFO torch.distributed.distributed_c10d Added key: store_based_barrier_key:1 to store for rank: 3

2023/08/15 02:49:09 INFO torch.distributed.distributed_c10d Rank 3: Completed store-based barrier for key:store_based_barrier_key:1 with 4 nodes.

2023/08/15 02:49:09 INFO torch.distributed.distributed_c10d Rank 0: Completed store-based barrier for key:store_based_barrier_key:1 with 4 nodes.

2023/08/15 02:49:09 INFO torch.distributed.distributed_c10d Rank 2: Completed store-based barrier for key:store_based_barrier_key:1 with 4 nodes.

2023/08/15 02:49:09 INFO torch.distributed.distributed_c10d Rank 1: Completed store-based barrier for key:store_based_barrier_key:1 with 4 nodes.

2023/08/15 02:49:09 INFO __main__ Distributed environment: MULTI_GPU Backend: nccl

Num processes: 4

Process index: 0

Local process index: 0

Device: cuda:0

Mixed precision type: fp16

2023/08/15 02:49:09 INFO torchdistill.datasets.util Loading train data

2023/08/15 02:49:12 INFO torchdistill.datasets.util dataset_id `ilsvrc2012/train`: 2.874385356903076 sec

2023/08/15 02:49:12 INFO torchdistill.datasets.util Loading val data

2023/08/15 02:49:12 INFO torchdistill.datasets.util dataset_id `ilsvrc2012/val`: 0.12787175178527832 sec

2023/08/15 02:49:15 INFO timm.models._builder Loading pretrained weights from Hugging Face hub (timm/vit_base_patch16_224.augreg2_in21k_ft_in1k)

2023/08/15 02:49:16 INFO timm.models._hub [timm/vit_base_patch16_224.augreg2_in21k_ft_in1k] Safe alternative available for 'pytorch_model.bin' (as 'model.safetensors'). Loading weights using safetensors.

2023/08/15 02:49:16 INFO torchdistill.common.main_util ckpt file is not found at `./resource/ckpt/ilsvrc2012/teacher/ilsvrc2012-maskedvit_base_patch16_224.pt`

2023/08/15 02:49:18 INFO torchdistill.common.main_util ckpt file is not found at `./imagenet/mask_distillation/ilsvrc2012-maskedvit_base_patch16_224_from_maskedvit_base_patch16_224.pt`

2023/08/15 02:49:18 INFO __main__ Start training

2023/08/15 02:49:18 INFO torchdistill.models.util [teacher model]

2023/08/15 02:49:18 INFO torchdistill.models.util Using the original teacher model

2023/08/15 02:49:18 INFO torchdistill.models.util [student model]

2023/08/15 02:49:18 INFO torchdistill.models.util Using the original student model

2023/08/15 02:49:18 INFO torchdistill.core.distillation Loss = 1.0 * OrgLoss + 1.0 * GenerativeKDLoss(

(cross_entropy_loss): CrossEntropyLoss()

(SmoothL1Loss): SmoothL1Loss()

)

2023/08/15 02:49:18 INFO torchdistill.core.distillation Freezing the whole teacher model

2023/08/15 02:49:18 INFO torchdistill.common.module_util `None` of `None` could not be reached in `DataParallel`

/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/accelerate/state.py:802: FutureWarning: The `use_fp16` property is deprecated and will be removed in version 1.0 of Accelerate use `AcceleratorState.mixed_precision == 'fp16'` instead.

warnings.warn(

/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/accelerate/state.py:802: FutureWarning: The `use_fp16` property is deprecated and will be removed in version 1.0 of Accelerate use `AcceleratorState.mixed_precision == 'fp16'` instead.

warnings.warn(

/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/accelerate/state.py:802: FutureWarning: The `use_fp16` property is deprecated and will be removed in version 1.0 of Accelerate use `AcceleratorState.mixed_precision == 'fp16'` instead.

warnings.warn(

/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/accelerate/state.py:802: FutureWarning: The `use_fp16` property is deprecated and will be removed in version 1.0 of Accelerate use `AcceleratorState.mixed_precision == 'fp16'` instead.

warnings.warn(

2023/08/15 02:49:24 INFO torchdistill.misc.log Epoch: [0] [ 0/5005] eta: 8:39:24 lr: 0.001 img/s: 21.99282017795937 loss: 0.4513 (0.4513) time: 6.2267 data: 3.3162 max mem: 8400

2023/08/15 02:49:24 INFO torch.nn.parallel.distributed Reducer buckets have been rebuilt in this iteration.

2023/08/15 02:49:24 INFO torch.nn.parallel.distributed Reducer buckets have been rebuilt in this iteration.

Traceback (most recent call last):

File "examples/legacy/image_classification_accelerate.py", line 217, in <module>

Traceback (most recent call last):

File "examples/legacy/image_classification_accelerate.py", line 217, in <module>

main(argparser.parse_args())

File "examples/legacy/image_classification_accelerate.py", line 198, in main

train(teacher_model, student_model, dataset_dict, ckpt_file_path, device, device_ids, distributed, config, args, accelerator)

File "examples/legacy/image_classification_accelerate.py", line 129, in train

train_one_epoch(training_box, device, epoch, log_freq)

File "examples/legacy/image_classification_accelerate.py", line 71, in train_one_epoch

main(argparser.parse_args())

File "examples/legacy/image_classification_accelerate.py", line 198, in main

training_box.update_params(loss)

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torchdistill/core/distillation.py", line 316, in update_params

train(teacher_model, student_model, dataset_dict, ckpt_file_path, device, device_ids, distributed, config, args, accelerator)

File "examples/legacy/image_classification_accelerate.py", line 129, in train

self.optimizer.step()

train_one_epoch(training_box, device, epoch, log_freq) File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/accelerate/optimizer.py", line 133, in step

File "examples/legacy/image_classification_accelerate.py", line 71, in train_one_epoch

training_box.update_params(loss)

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torchdistill/core/distillation.py", line 316, in update_params

self.optimizer.step()

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/accelerate/optimizer.py", line 133, in step

self.scaler.step(self.optimizer, closure)self.scaler.step(self.optimizer, closure)

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torch/cuda/amp/grad_scaler.py", line 339, in step

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torch/cuda/amp/grad_scaler.py", line 339, in step

assert len(optimizer_state["found_inf_per_device"]) > 0, "No inf checks were recorded for this optimizer."assert len(optimizer_state["found_inf_per_device"]) > 0, "No inf checks were recorded for this optimizer."

AssertionErrorAssertionError: : No inf checks were recorded for this optimizer.No inf checks were recorded for this optimizer.

Traceback (most recent call last):

File "examples/legacy/image_classification_accelerate.py", line 217, in <module>

main(argparser.parse_args())

File "examples/legacy/image_classification_accelerate.py", line 198, in main

train(teacher_model, student_model, dataset_dict, ckpt_file_path, device, device_ids, distributed, config, args, accelerator)

File "examples/legacy/image_classification_accelerate.py", line 129, in train

train_one_epoch(training_box, device, epoch, log_freq)

File "examples/legacy/image_classification_accelerate.py", line 71, in train_one_epoch

training_box.update_params(loss)

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torchdistill/core/distillation.py", line 316, in update_params

self.optimizer.step()

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/accelerate/optimizer.py", line 133, in step

self.scaler.step(self.optimizer, closure)

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torch/cuda/amp/grad_scaler.py", line 339, in step

assert len(optimizer_state["found_inf_per_device"]) > 0, "No inf checks were recorded for this optimizer."

AssertionError: No inf checks were recorded for this optimizer.

Traceback (most recent call last):

File "examples/legacy/image_classification_accelerate.py", line 217, in <module>

main(argparser.parse_args())

File "examples/legacy/image_classification_accelerate.py", line 198, in main

train(teacher_model, student_model, dataset_dict, ckpt_file_path, device, device_ids, distributed, config, args, accelerator)

File "examples/legacy/image_classification_accelerate.py", line 129, in train

train_one_epoch(training_box, device, epoch, log_freq)

File "examples/legacy/image_classification_accelerate.py", line 71, in train_one_epoch

training_box.update_params(loss)

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torchdistill/core/distillation.py", line 316, in update_params

self.optimizer.step()

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/accelerate/optimizer.py", line 133, in step

self.scaler.step(self.optimizer, closure)

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torch/cuda/amp/grad_scaler.py", line 339, in step

assert len(optimizer_state["found_inf_per_device"]) > 0, "No inf checks were recorded for this optimizer."

AssertionError: No inf checks were recorded for this optimizer.

ERROR:torch.distributed.elastic.multiprocessing.api:failed (exitcode: 1) local_rank: 0 (pid: 3701268) of binary: /root/miniconda3/envs/pytorch_1/bin/python

Traceback (most recent call last):

File "/root/miniconda3/envs/pytorch_1/bin/accelerate", line 8, in <module>

sys.exit(main())

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/accelerate/commands/accelerate_cli.py", line 45, in main

args.func(args)

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/accelerate/commands/launch.py", line 970, in launch_command

multi_gpu_launcher(args)

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/accelerate/commands/launch.py", line 646, in multi_gpu_launcher

distrib_run.run(args)

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torch/distributed/run.py", line 753, in run

elastic_launch(

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torch/distributed/launcher/api.py", line 132, in __call__

return launch_agent(self._config, self._entrypoint, list(args))

File "/root/miniconda3/envs/pytorch_1/lib/python3.8/site-packages/torch/distributed/launcher/api.py", line 246, in launch_agent

raise ChildFailedError(

torch.distributed.elastic.multiprocessing.errors.ChildFailedError:

============================================================

examples/legacy/image_classification_accelerate.py FAILED

------------------------------------------------------------

Failures:

[1]:

time : 2023-08-15_02:49:37

host : baa8ef5448b2

rank : 1 (local_rank: 1)

exitcode : 1 (pid: 3701269)

error_file: <N/A>

traceback : To enable traceback see: https://pytorch.org/docs/stable/elastic/errors.html

[2]:

time : 2023-08-15_02:49:37

host : baa8ef5448b2

rank : 2 (local_rank: 2)

exitcode : 1 (pid: 3701270)

error_file: <N/A>

traceback : To enable traceback see: https://pytorch.org/docs/stable/elastic/errors.html

[3]:

time : 2023-08-15_02:49:37

host : baa8ef5448b2

rank : 3 (local_rank: 3)

exitcode : 1 (pid: 3701271)

error_file: <N/A>

traceback : To enable traceback see: https://pytorch.org/docs/stable/elastic/errors.html

------------------------------------------------------------

Root Cause (first observed failure):

[0]:

time : 2023-08-15_02:49:37

host : baa8ef5448b2

rank : 0 (local_rank: 0)

exitcode : 1 (pid: 3701268)

error_file: <N/A>

traceback : To enable traceback see: https://pytorch.org/docs/stable/elastic/errors.html