Summary of Issue

Note - Related container log error: ERROR JupyterSparkMonitorListener: Exception sending socket message:

Hello! We are running a Jupyterhub service that spawns Kubernetes pods for single user servers. We also run our jupyterhub proxy as a separate service. We've been experiencing a very persistent, but flaky, issue with the Sparkmonitor extension. When creating a spark session, I am seeing the following error AttributeError: 'ScalaMonitor' object has no attribute 'comm'. However, this does not happen consistently. I oftentimes am able to create a session where the monitor will work but then if I restart the kernel, the monitor is broken with the above error (or vice versa).

For example, on my first attempt at creating a spark session, the extension works fine. Container log:

INFO:SparkMonitorKernel:Starting Kernel Extension

INFO:SparkMonitorKernel:Socket Listening on port 59845

INFO:SparkMonitorKernel:Starting socket thread, going to accept

INFO:SparkMonitorKernel:59845

INFO:SparkMonitorKernel:Adding jar from /work/venv_core_ml_kernel/lib/python3.7/site-packages/sparkmonitor/listener_2.12.jar

[I 2021-12-21 23:45:52.892 SingleUserNotebookApp log:189] 101 GET /user/username/api/kernels/e5800417-4831-4bb4-9eec-85d7fb16b388/channels?session_id=aca66ed3-e383-4dfd-bd21-eae11e96ccb2 ([email protected]) 1877.03ms

[I 2021-12-21 23:45:53.002 SingleUserNotebookApp log:189] 101 GET /user/username/api/kernels/e5800417-4831-4bb4-9eec-85d7fb16b388/channels?session_id=26868351-57cd-43c6-b14b-c85bd1842316 ([email protected]) 2.18ms

[I 2021-12-21 23:45:53.147 SingleUserNotebookApp log:189] 101 GET /user/username/api/kernels/e5800417-4831-4bb4-9eec-85d7fb16b388/channels?session_id=0ccb7d08-e61b-44e6-a08c-57a36d2797ed ([email protected]) 2.12ms

[I 2021-12-21 23:45:55.711 SingleUserNotebookApp log:189] 200 GET /user/username/api/terminals?1640130355597 ([email protected]) 1.13ms

[I 2021-12-21 23:45:56.253 SingleUserNotebookApp log:189] 200 GET /user/username/api/sessions?1640130356141 ([email protected]) 1.09ms

[I 2021-12-21 23:45:56.254 SingleUserNotebookApp log:189] 200 GET /user/username/api/kernels?1640130356142 ([email protected]) 1.01ms

INFO:SparkMonitorKernel:SparkMonitor comm opened from frontend.

INFO:SparkMonitorKernel:SparkMonitor comm opened from frontend.

[I 2021-12-21 23:46:03.074 SingleUserNotebookApp log:189] 200 GET /user/username/api/contents?content=1&1640130360080 ([email protected]) 2874.67ms

[I 2021-12-21 23:46:05.820 SingleUserNotebookApp log:189] 200 GET /user/username/api/terminals?1640130365706 ([email protected]) 1.36ms

[I 2021-12-21 23:46:05.981 SingleUserNotebookApp log:189] 200 GET /user/username/api/kernelspecs?1640130365868 ([email protected]) 2.78ms

[I 2021-12-21 23:46:07.068 SingleUserNotebookApp log:189] 200 GET /user/username/api/sessions?1640130366954 ([email protected]) 1.18ms

[I 2021-12-21 23:46:07.070 SingleUserNotebookApp log:189] 200 GET /user/username/api/kernels?1640130366956 ([email protected]) 1.06ms

[I 2021-12-21 23:46:16.011 SingleUserNotebookApp log:189] 200 GET /user/username/api/contents?content=1&1640130373104 ([email protected]) 2795.89ms

[I 2021-12-21 23:46:16.014 SingleUserNotebookApp log:189] 200 GET /user/username/api/terminals?1640130375820 ([email protected]) 0.98ms

[I 2021-12-21 23:46:17.181 SingleUserNotebookApp log:189] 200 GET /user/username/api/sessions?1640130377069 ([email protected]) 1.22ms

[I 2021-12-21 23:46:17.182 SingleUserNotebookApp log:189] 200 GET /user/username/api/kernels?1640130377071 ([email protected]) 0.90ms

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

I1221 23:46:20.384598 242 sched.cpp:232] Version: 1.7.2

I1221 23:46:20.385978 225 sched.cpp:336] New master detected at [email protected]:5050

I1221 23:46:20.386255 225 sched.cpp:401] Authenticating with master [email protected]:5050

I1221 23:46:20.386270 225 sched.cpp:408] Using default CRAM-MD5 authenticatee

I1221 23:46:20.386425 234 authenticatee.cpp:97] Initializing client SASL

I1221 23:46:20.387233 234 authenticatee.cpp:121] Creating new client SASL connection

W1221 23:46:20.397209 241 process.cpp:838] Failed to recv on socket 437 to peer '36.72.23.53:34312': Decoder error

I1221 23:46:20.398273 229 authenticatee.cpp:213] Received SASL authentication mechanisms: CRAM-MD5

I1221 23:46:20.398306 229 authenticatee.cpp:239] Attempting to authenticate with mechanism 'CRAM-MD5'

I1221 23:46:20.398959 238 authenticatee.cpp:259] Received SASL authentication step

I1221 23:46:20.399524 239 authenticatee.cpp:299] Authentication success

I1221 23:46:20.399653 236 sched.cpp:513] Successfully authenticated with master [email protected]:5050

I1221 23:46:20.401082 230 sched.cpp:744] Framework registered with 195f7369-64e5-4bc5-a8ed-8b576994450a-11035

21/12/21 23:46:20 WARN MetricsConfig: Cannot locate configuration: tried hadoop-metrics2-s3a-file-system.properties,hadoop-metrics2.properties

INFO:SparkMonitorKernel:Client Connected ('127.0.0.1', 35478)

[I 2021-12-21 23:46:26.141 SingleUserNotebookApp log:189] 200 GET /user/username/api/terminals?1640130386026 ([email protected]) 1.00ms

[I 2021-12-21 23:46:28.410 SingleUserNotebookApp log:189] 200 GET /user/username/api/contents?content=1&1640130386068 ([email protected]) 2230.40ms

[I 2021-12-21 23:46:28.416 SingleUserNotebookApp log:189] 200 GET /user/username/api/sessions?1640130387180 ([email protected]) 3.12ms

[I 2021-12-21 23:46:28.417 SingleUserNotebookApp log:189] 200 GET /user/username/api/kernels?1640130387182 ([email protected]) 3.31ms

[I 2021-12-21 23:46:39.097 SingleUserNotebookApp log:189] 200 GET /user/username/api/contents?content=1&1640130396071 ([email protected]) 2914.93ms

[I 2021-12-21 23:46:39.100 SingleUserNotebookApp log:189] 200 GET /user/username/api/terminals?1640130396136 ([email protected]) 1.93ms

[I 2021-12-21 23:46:39.101 SingleUserNotebookApp log:189] 200 GET /user/username/api/sessions?1640130398473 ([email protected]) 2.26ms

[I 2021-12-21 23:46:39.101 SingleUserNotebookApp log:189] 200 GET /user/username/api/kernels?1640130398474 ([email protected]) 2.46ms

/source/virtualenv_run_jupyter/lib/python3.7/site-packages/jupyter_client/manager.py:358: FutureWarning: Method cleanup(connection_file=True) is deprecated, use cleanup_resources(restart=False).

FutureWarning)

[I 2021-12-21 23:46:39.217 SingleUserNotebookApp multikernelmanager:534] Kernel restarted: e5800417-4831-4bb4-9eec-85d7fb16b388

But if I restart the kernel and try again, the extension fails with AttributeError: 'ScalaMonitor' object has no attribute 'comm' (Note: it does not consistently follow this pattern of first time it works, second time it fails. Often the first session will fail and then it works after a kernel restart. Or it will fail three times in a row then work.)

INFO:SparkMonitorKernel:Client Connected ('127.0.0.1', 35472)

Exception in thread Thread-8:

Traceback (most recent call last):

File "/usr/lib/python3.7/threading.py", line 926, in _bootstrap_inner

self.run()

File "/work/venv_core_ml_kernel/lib/python3.7/site-packages/sparkmonitor/kernelextension.py", line 126, in run

self.onrecv(msg)

File "/work/venv_core_ml_kernel/lib/python3.7/site-packages/sparkmonitor/kernelextension.py", line 145, in onrecv

'msg': msg

File "/work/venv_core_ml_kernel/lib/python3.7/site-packages/sparkmonitor/kernelextension.py", line 223, in sendToFrontEnd

monitor.send(msg)

File "/work/venv_core_ml_kernel/lib/python3.7/site-packages/sparkmonitor/kernelextension.py", line 57, in send

self.comm.send(msg)

AttributeError: 'ScalaMonitor' object has no attribute 'comm'

Container log on failure:

[I 2021-12-21 23:46:39.219 SingleUserNotebookApp log:189] 200 POST /user/username/api/kernels/e5800417-4831-4bb4-9eec-85d7fb16b388/restart?1640130391290 ([email protected]) 7817.75ms

[I 2021-12-21 23:46:39.388 SingleUserNotebookApp log:189] 101 GET /user/username/api/kernels/e5800417-4831-4bb4-9eec-85d7fb16b388/channels?session_id=26868351-57cd-43c6-b14b-c85bd1842316 ([email protected]) 2.70ms

INFO:SparkMonitorKernel:Starting Kernel Extension

INFO:SparkMonitorKernel:Socket Listening on port 53939

INFO:SparkMonitorKernel:Starting socket thread, going to accept

INFO:SparkMonitorKernel:53939

INFO:SparkMonitorKernel:Adding jar from /work/venv_core_ml_kernel/lib/python3.7/site-packages/sparkmonitor/listener_2.12.jar

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

I1221 23:46:45.923640 389 sched.cpp:232] Version: 1.7.2

I1221 23:46:45.925024 378 sched.cpp:336] New master detected at [email protected]:5050

I1221 23:46:45.925308 378 sched.cpp:401] Authenticating with master [email protected]:5050

I1221 23:46:45.925323 378 sched.cpp:408] Using default CRAM-MD5 authenticatee

I1221 23:46:45.925534 382 authenticatee.cpp:97] Initializing client SASL

I1221 23:46:45.926290 382 authenticatee.cpp:121] Creating new client SASL connection

W1221 23:46:45.928249 388 process.cpp:838] Failed to recv on socket 437 to peer '36.72.23.53:37450': Decoder error

I1221 23:46:45.929303 375 authenticatee.cpp:213] Received SASL authentication mechanisms: CRAM-MD5

I1221 23:46:45.929327 375 authenticatee.cpp:239] Attempting to authenticate with mechanism 'CRAM-MD5'

I1221 23:46:45.929982 383 authenticatee.cpp:259] Received SASL authentication step

I1221 23:46:45.930640 386 authenticatee.cpp:299] Authentication success

I1221 23:46:45.930742 385 sched.cpp:513] Successfully authenticated with master [email protected]:5050

I1221 23:46:45.933359 377 sched.cpp:744] Framework registered with 195f7369-64e5-4bc5-a8ed-8b576994450a-11038

21/12/21 23:46:46 WARN MetricsConfig: Cannot locate configuration: tried hadoop-metrics2-s3a-file-system.properties,hadoop-metrics2.properties

INFO:SparkMonitorKernel:Client Connected ('127.0.0.1', 35472)

INFO:SparkMonitorKernel:SparkMonitor comm opened from frontend.

[I 2021-12-21 23:46:49.219 SingleUserNotebookApp log:189] 200 GET /user/username/api/terminals?1640130409107 ([email protected]) 1.17ms

[I 2021-12-21 23:46:49.220 SingleUserNotebookApp log:189] 200 GET /user/username/api/sessions?1640130409109 ([email protected]) 1.04ms

[I 2021-12-21 23:46:49.222 SingleUserNotebookApp log:189] 200 GET /user/username/api/kernels?1640130409111 ([email protected]) 0.99ms

21/12/21 23:46:51 ERROR JupyterSparkMonitorListener: Exception sending socket message:

java.net.SocketException: Broken pipe (Write failed)

at java.net.SocketOutputStream.socketWrite0(Native Method)

at java.net.SocketOutputStream.socketWrite(SocketOutputStream.java:111)

at java.net.SocketOutputStream.write(SocketOutputStream.java:155)

at sun.nio.cs.StreamEncoder.writeBytes(StreamEncoder.java:221)

at sun.nio.cs.StreamEncoder.implFlushBuffer(StreamEncoder.java:291)

at sun.nio.cs.StreamEncoder.implFlush(StreamEncoder.java:295)

at sun.nio.cs.StreamEncoder.flush(StreamEncoder.java:141)

at java.io.OutputStreamWriter.flush(OutputStreamWriter.java:229)

at sparkmonitor.listener.JupyterSparkMonitorListener.send(CustomListener.scala:54)

at sparkmonitor.listener.JupyterSparkMonitorListener.onExecutorAdded(CustomListener.scala:652)

at org.apache.spark.scheduler.SparkListenerBus.doPostEvent(SparkListenerBus.scala:63)

at org.apache.spark.scheduler.SparkListenerBus.doPostEvent$(SparkListenerBus.scala:28)

at org.apache.spark.scheduler.AsyncEventQueue.doPostEvent(AsyncEventQueue.scala:37)

at org.apache.spark.scheduler.AsyncEventQueue.doPostEvent(AsyncEventQueue.scala:37)

at org.apache.spark.util.ListenerBus.postToAll(ListenerBus.scala:115)

at org.apache.spark.util.ListenerBus.postToAll$(ListenerBus.scala:99)

at org.apache.spark.scheduler.AsyncEventQueue.super$postToAll(AsyncEventQueue.scala:105)

at org.apache.spark.scheduler.AsyncEventQueue.$anonfun$dispatch$1(AsyncEventQueue.scala:105)

at scala.runtime.java8.JFunction0$mcJ$sp.apply(JFunction0$mcJ$sp.java:23)

at scala.util.DynamicVariable.withValue(DynamicVariable.scala:62)

at org.apache.spark.scheduler.AsyncEventQueue.org$apache$spark$scheduler$AsyncEventQueue$$dispatch(AsyncEventQueue.scala:100)

at org.apache.spark.scheduler.AsyncEventQueue$$anon$2.$anonfun$run$1(AsyncEventQueue.scala:96)

at org.apache.spark.util.Utils$.tryOrStopSparkContext(Utils.scala:1319)

at org.apache.spark.scheduler.AsyncEventQueue$$anon$2.run(AsyncEventQueue.scala:96)

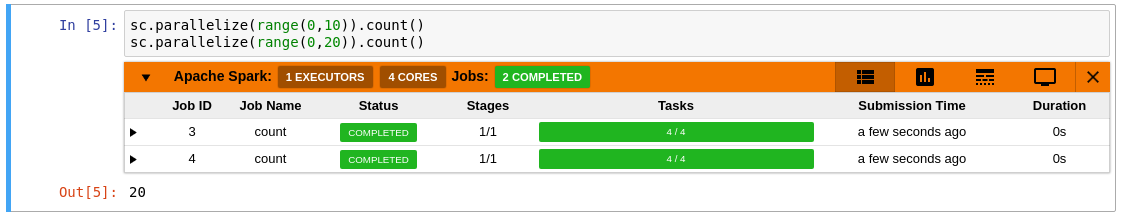

I'm experiencing this issue even with a minimal configuration such as this:

from pyspark.sql import SparkSession

spark = SparkSession.builder\

.config('spark.extraListeners', 'sparkmonitor.listener.JupyterSparkMonitorListener')\

.config('spark.driver.extraClassPath', '/source/virtualenv_run_jupyter/lib/python3.7/site-packages/sparkmonitor/listener_2.12.jar:/opt/spark/extra_jars/guava-27.0.1-jre.jar')\

.getOrCreate()

Additional Details

We are using the following Jupyter related packages/dependencies in our containers:

ipykernel==5.3.4

ipython==7.20.0

jupyter-client==6.1.5

jupyter-core==4.7.1

jupyter-packaging==0.10.2

jupyter-server==1.7.0

jupyter-server-proxy==1.6.0

jupyter-telemetry==0.1.0

jupyterhub==1.4.2

jupyterlab==3.0.16

jupyterlab-pygments==0.1.2

jupyterlab-server==2.5.2

jupyterlab-widgets==1.0.0

nbconvert==5.5.0

nbformat==5.1.3

traitlets==4.3.3

Any assistance with troubleshooting this issue would be greatly appreciated. A lot of our users really like the Sparkmonitor! Please let me know if I can provide any other details about our setup that would be helpful. Thank you!