1: Build two images

docker build -t myos6 .

docker build -t myos6-hadoop-spark .

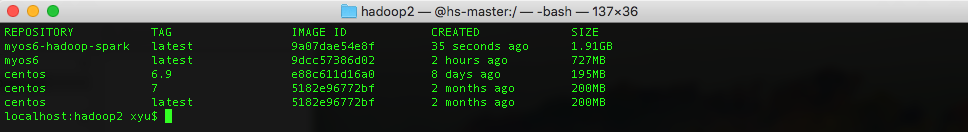

2: Check all images

docker images

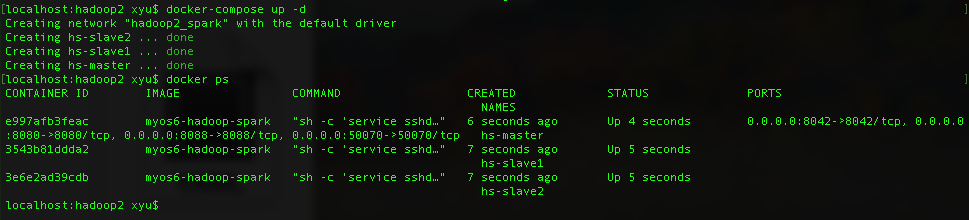

3: Start the containers

docker-compose up -d

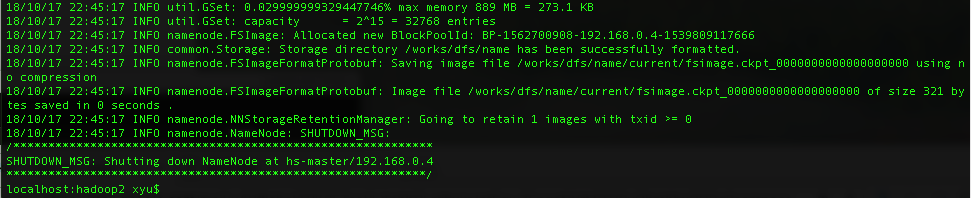

4: Format the NameNode (first time only)

docker-compose exec hadoop-spark-master hdfs namenode -format

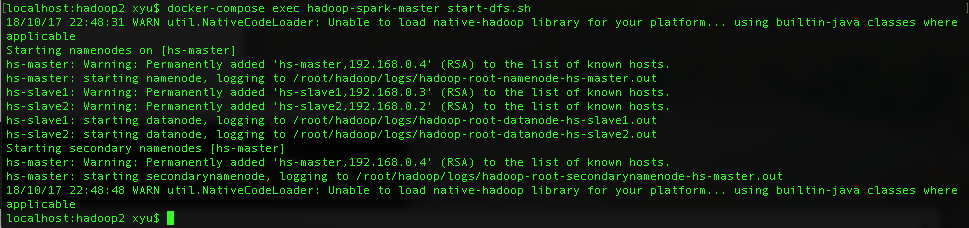
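Formatting the NameNode a second time wipes the existing HDFS metadata, so it may help to guard this step in a script. The sketch below is one way to do that, not part of the original setup: the marker file `.namenode_formatted` and the `COMPOSE` variable are hypothetical names introduced here, and `-T` is added only so that `docker-compose exec` works without a terminal.

```shell
# Assumption: docker-compose is the compose command; override COMPOSE if yours differs.
COMPOSE="${COMPOSE:-docker-compose}"
# Hypothetical marker file kept on the host next to docker-compose.yml.
MARKER="${MARKER:-.namenode_formatted}"

format_namenode_once() {
  if [ -e "$MARKER" ]; then
    echo "Marker $MARKER exists; skipping NameNode format."
    return 0
  fi
  # Same command as in step 4; the marker is written only if the format succeeds.
  $COMPOSE exec -T hadoop-spark-master hdfs namenode -format && touch "$MARKER"
}
```

Calling `format_namenode_once` formats on the first run and does nothing afterwards. Delete the marker file if you remove the HDFS data and need to format again.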

5: Start HDFS

docker-compose exec hadoop-spark-master start-dfs.sh

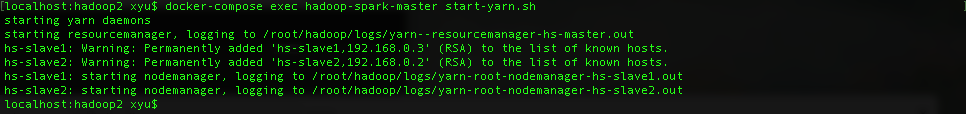

6: Start YARN

docker-compose exec hadoop-spark-master start-yarn.sh

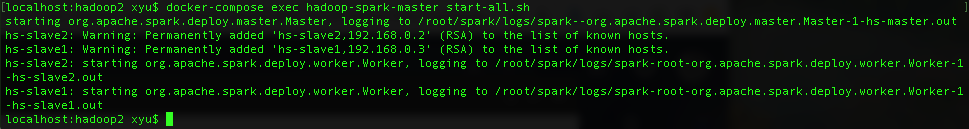

7: Start Spark

docker-compose exec hadoop-spark-master start-all.sh

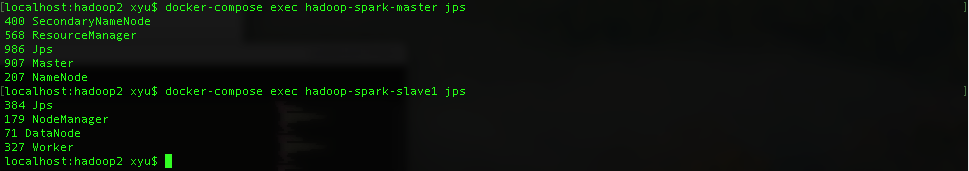
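Steps 5 to 7 have to run in order, so they can be wrapped in one small function that stops at the first failure. This is a minimal sketch under the same commands as above; the `COMPOSE` variable and the function name are assumptions introduced for illustration.

```shell
# Assumption: docker-compose is the compose command; override COMPOSE if yours differs.
COMPOSE="${COMPOSE:-docker-compose}"

start_cluster() {
  # Same scripts and order as steps 5, 6 and 7.
  for script in start-dfs.sh start-yarn.sh start-all.sh; do
    echo "Running $script on hadoop-spark-master"
    # -T disables pseudo-TTY allocation so this also works from a script.
    $COMPOSE exec -T hadoop-spark-master "$script" || {
      echo "$script failed; stopping." >&2
      return 1
    }
  done
}
```

Run `start_cluster` after `docker-compose up -d` has finished.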

8: Check service status

docker-compose exec hadoop-spark-master jps

docker-compose exec hadoop-spark-slave1 jps

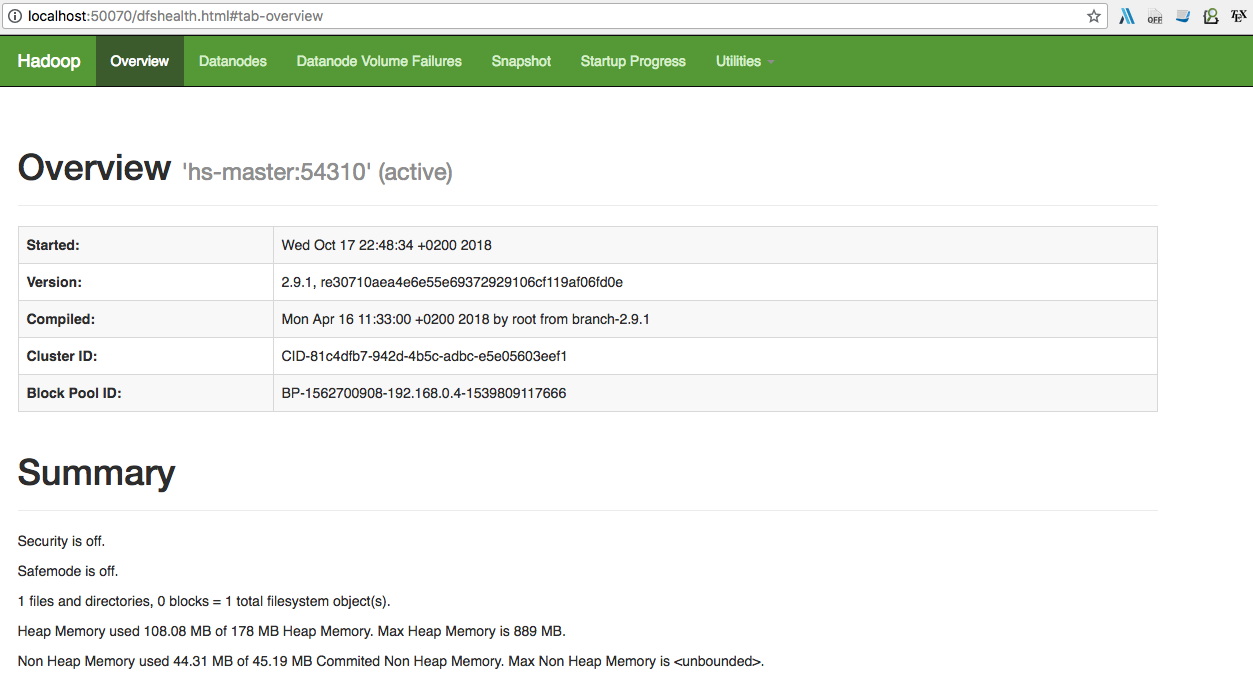

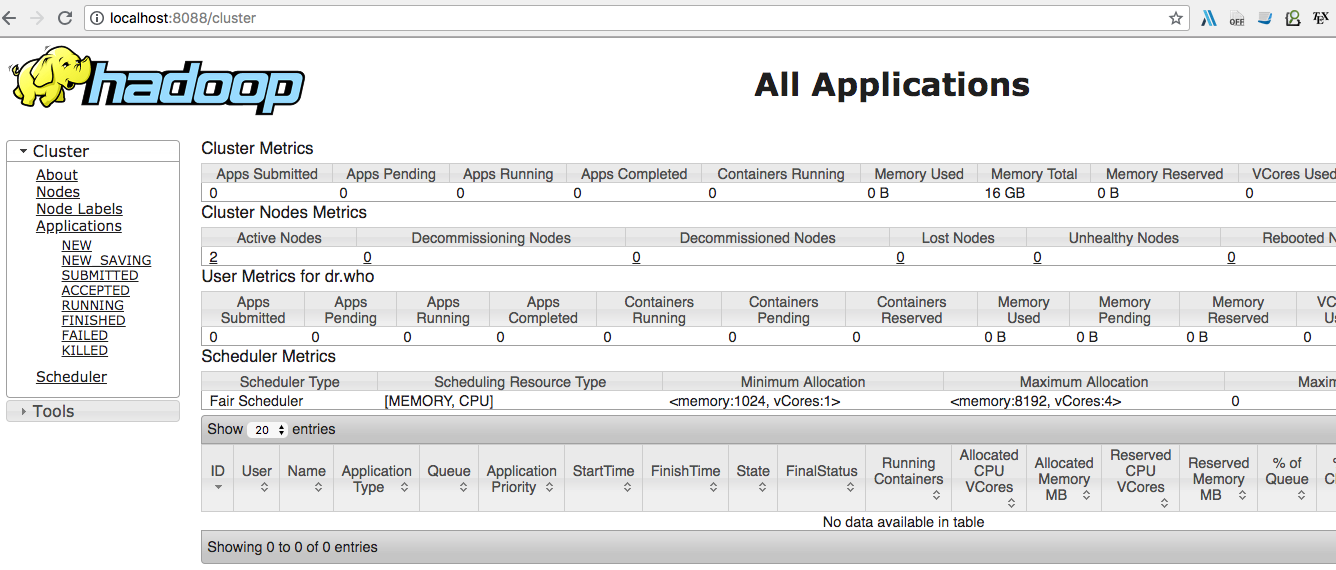

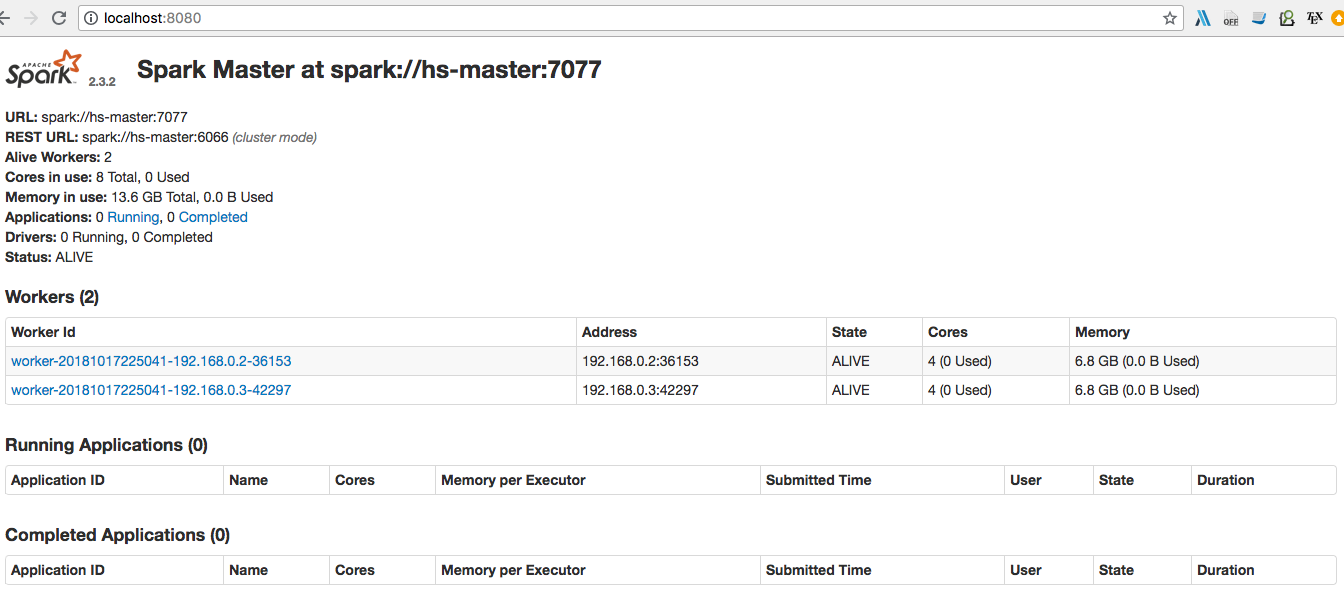
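Instead of reading the `jps` output by eye, a helper can report which daemons are missing. The sketch below is an illustration only: the daemon names passed to it (for example `NameNode`, `ResourceManager`, `Master` on the master and `DataNode`, `NodeManager`, `Worker` on a slave) are assumptions about a typical Hadoop and Spark standalone layout and may differ in this cluster.

```shell
# Print every expected daemon name that does not appear in the given jps output.
# $1: jps output; remaining arguments: expected daemon names (assumed, adjust to your setup).
missing_daemons() {
  jps_output=$1
  shift
  for name in "$@"; do
    # jps prints "<pid> <MainClass>"; compare column 2 exactly.
    # tr strips carriage returns that a TTY-attached exec may add.
    if ! printf '%s\n' "$jps_output" | tr -d '\r' |
        awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
      echo "$name"
    fi
  done
}

# Example call (prints nothing when all listed daemons are running):
#   missing_daemons "$(docker-compose exec -T hadoop-spark-master jps)" NameNode ResourceManager Master
```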

9: Check all services through their web UIs

http://localhost:50070

http://localhost:8088

http://localhost:8080

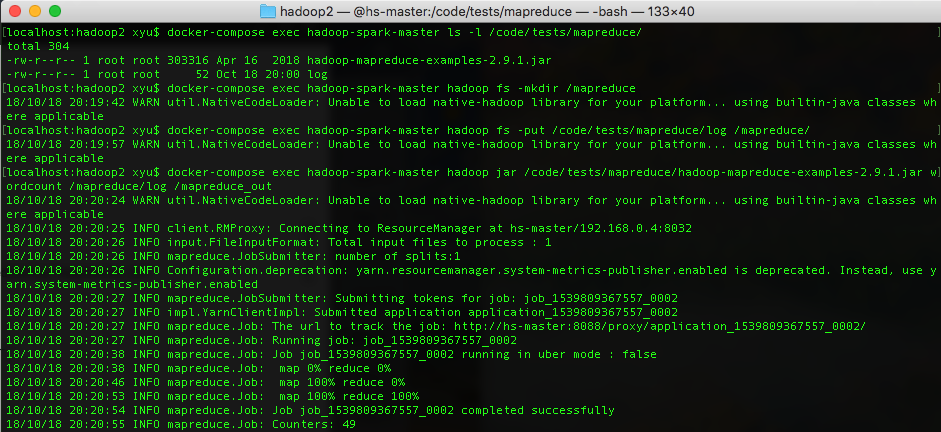

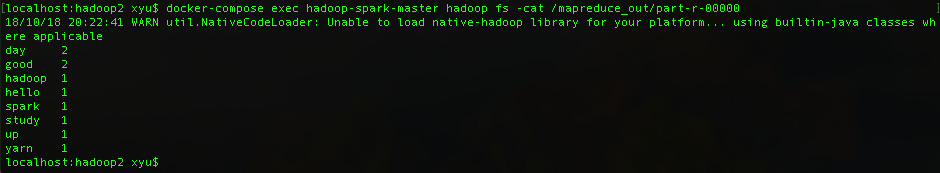
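The same check can be done from the command line. The sketch below probes the three URLs listed in this step with `curl`; with Hadoop 2.x and Spark defaults these ports are usually the NameNode UI (50070), the YARN ResourceManager UI (8088) and the Spark master UI (8080), but that mapping is an assumption about this compose file. The function names are introduced here for illustration.

```shell
# Report whether a single web UI answers.
check_ui() {
  # -s: silent, -f: treat HTTP errors as failure, -o /dev/null: discard the body,
  # --max-time 5: give up after five seconds.
  if curl -sf -o /dev/null --max-time 5 "$1"; then
    echo "UP   $1"
  else
    echo "DOWN $1"
  fi
}

# Probe every URL listed in step 9.
check_all_uis() {
  for url in http://localhost:50070 http://localhost:8088 http://localhost:8080; do
    check_ui "$url"
  done
}
```

Run `check_all_uis` once the services from steps 5 to 7 have had a few seconds to come up.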

10: Test wordcount with Hadoop MapReduce

docker-compose exec hadoop-spark-master ls -l /code/tests/mapreduce/

docker-compose exec hadoop-spark-master hadoop fs -mkdir /mapreduce

docker-compose exec hadoop-spark-master hadoop fs -put /code/tests/mapreduce/log /mapreduce/

docker-compose exec hadoop-spark-master hadoop jar /code/tests/mapreduce/hadoop-mapreduce-examples-2.9.1.jar wordcount /mapreduce/log /mapreduce_out

docker-compose exec hadoop-spark-master hadoop fs -cat /mapreduce_out/part-r-00000
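Run as written, these commands work once: on a second run `-mkdir` complains that `/mapreduce` exists and the job refuses to write into an existing `/mapreduce_out`. A rerunnable variant could look like the sketch below. It uses the same paths as above; the `COMPOSE` variable and the helper names are assumptions added for illustration, and reading `part-r-00000` assumes the job ran with a single reducer.

```shell
# Assumption: docker-compose is the compose command; override COMPOSE if yours differs.
COMPOSE="${COMPOSE:-docker-compose}"

# Run a command on the master container (-T: no pseudo-TTY, safe in scripts).
on_master() { $COMPOSE exec -T hadoop-spark-master "$@"; }

run_wordcount() {
  # -p: no error if the directory already exists.
  on_master hadoop fs -mkdir -p /mapreduce &&
  # -f: overwrite the input file if it was uploaded before.
  on_master hadoop fs -put -f /code/tests/mapreduce/log /mapreduce/ &&
  # Remove old results; -f: no error if the directory is missing.
  on_master hadoop fs -rm -r -f /mapreduce_out &&
  on_master hadoop jar /code/tests/mapreduce/hadoop-mapreduce-examples-2.9.1.jar wordcount /mapreduce/log /mapreduce_out &&
  on_master hadoop fs -cat /mapreduce_out/part-r-00000
}
```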

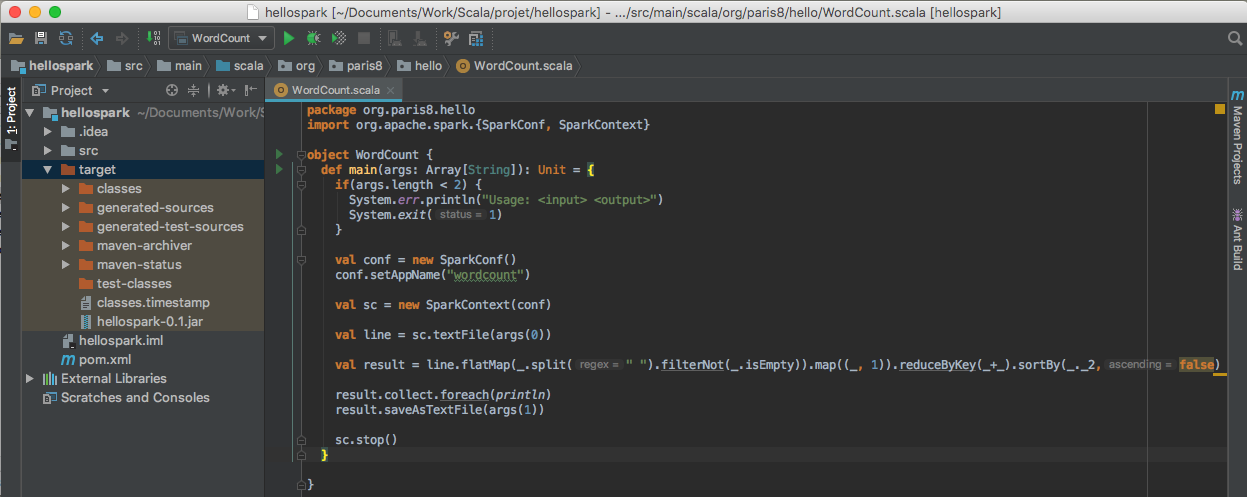
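To sanity-check the job output, the same count can be approximated locally on the input file. This is a rough sketch, not the Hadoop implementation: it assumes the example splits words on whitespace and writes one `word<TAB>count` line per word sorted by byte order, and `local_wordcount` is a name introduced here.

```shell
# Count whitespace-separated words in a file and print "word<TAB>count",
# sorted in byte order (LC_ALL=C) to resemble the job's key ordering.
local_wordcount() {
  awk '{ for (i = 1; i <= NF; i++) count[$i]++ }
       END { for (w in count) print w "\t" count[w] }' "$1" | LC_ALL=C sort
}

# Example comparison (paths are illustrative):
#   local_wordcount ./tests/mapreduce/log > local.txt
#   docker-compose exec -T hadoop-spark-master hadoop fs -cat /mapreduce_out/part-r-00000 > hadoop.txt
#   diff local.txt hadoop.txt
```

Small differences can still appear, for instance if the input contains form feeds or other separators that `awk` and Hadoop treat differently.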

11: Create a Spark project and upload the jar to the server

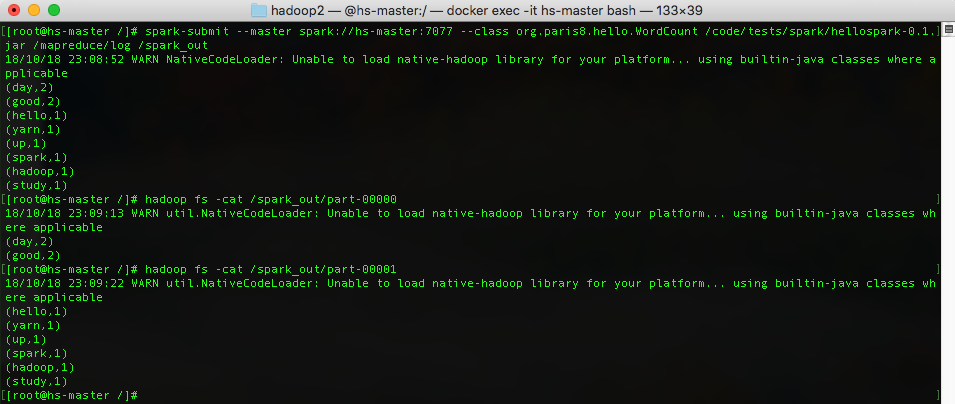

12: Test wordcount with Spark