This project implements DeepMind's DQN algorithm and its associated bag of tricks. It relies on TensorFlow and the OpenAI Gym. It currently includes the DQN and Double DQN algorithms. The most notable omission is prioritized experience replay, which is not yet implemented.
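For readers unfamiliar with the difference between the two included algorithms, here is a minimal sketch of how their bootstrap targets differ. It is plain Python for illustration only and is not taken from this repository's TensorFlow code; the function names, argument names, and the default `gamma` are assumptions.

```python
def dqn_target(reward, done, next_q_target, gamma=0.99):
    """Vanilla DQN: the target network both selects and evaluates the next action."""
    if done:
        # No bootstrapping past a terminal state.
        return reward
    return reward + gamma * max(next_q_target)


def double_dqn_target(reward, done, next_q_online, next_q_target, gamma=0.99):
    """Double DQN: the online network selects the action, the target network evaluates it."""
    if done:
        return reward
    # Index of the action the online network currently prefers.
    best_action = max(range(len(next_q_online)), key=lambda a: next_q_online[a])
    return reward + gamma * next_q_target[best_action]


if __name__ == "__main__":
    # Hypothetical Q-values for a two-action state.
    online = [3.0, 1.0]
    target = [0.5, 2.0]
    print(dqn_target(1.0, False, target, gamma=0.9))
    print(double_dqn_target(1.0, False, online, target, gamma=0.9))
```

Decoupling action selection from action evaluation is what lets Double DQN reduce the overestimation that comes from taking a max over noisy Q-values.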

Python 3 or greater

TensorFlow 1.0 or greater

To train an agent (on Breakout by default):

> python dqn/train.py --name [name_for_this_run]

All summaries, videos, and checkpoints will go to the results directory.

You can record videos using a trained model by running:

> python dqn/demo.py

To customize a training or demo run (for example, to use a different game), change the available settings in dqn/config.py.

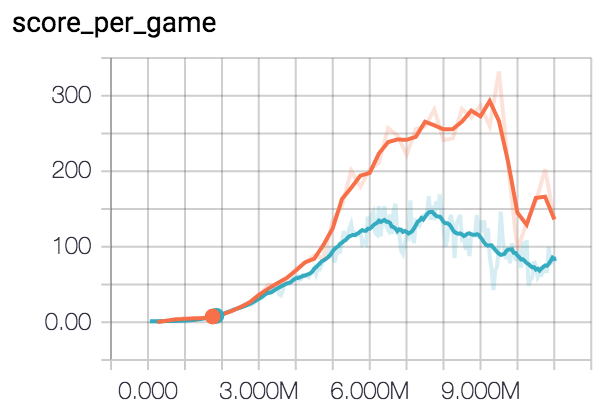

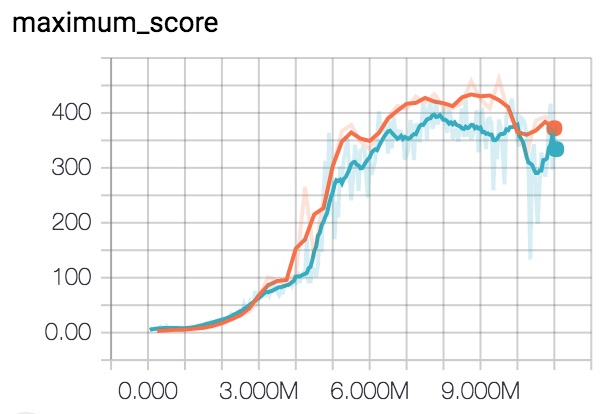

Since every run has a name, TensorBoard summaries are automatically written to a corresponding subdirectory under results/stats. Algorithmic variations can then be compared with graphical overlays in TensorBoard:

> tensorboard --logdir=results/stats

Running vanilla DQN on OpenAI Gym environment BreakoutDeterministic-v3