If a constraint has a large result set, fetching the page tree takes much longer than without constraints and may result in errors.

I assume the extension may be fetching too many results. After several changes to the page tree mechanism, the core no longer fetches the entire page tree but only the expanded part, which was done to address earlier page tree performance problems.

If I fetch with this extension "pagetreefilter" using a constraint with a large result set I get one of:

- Page tree error (error message)

- A spinner that leaves the page tree and the text field unresponsive, so the only way out is a reload (actually a problem in the TYPO3 core, I think)

I still find the extension very nice and quite useful. The editors on our site will most likely not get this problem as they see only a much smaller subset of pages (due to the mount points used).

I am not using this in production yet, but it would be nice to find a way to solve these problems. I will upgrade to v11 soon.

System

I am using

- TYPO3 10.4.26 (latest)

- pagetreefilter 0.2.1 (latest for v10)

The site has about 40 000 pages:

    select count(*) from pages where sys_language_uid=0 and not deleted;
    +----------+
    | count(*) |
    +----------+
    |    40932 |
    +----------+

Reproduce

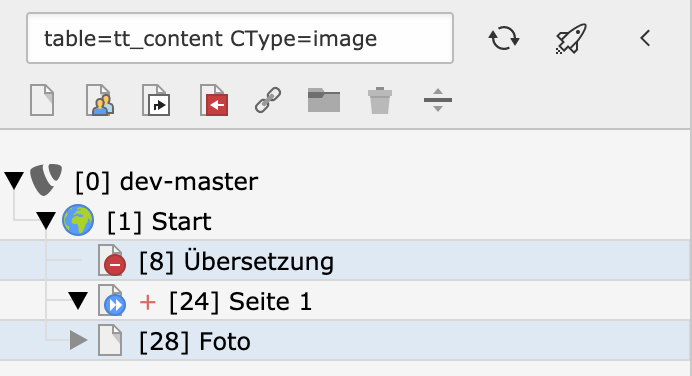

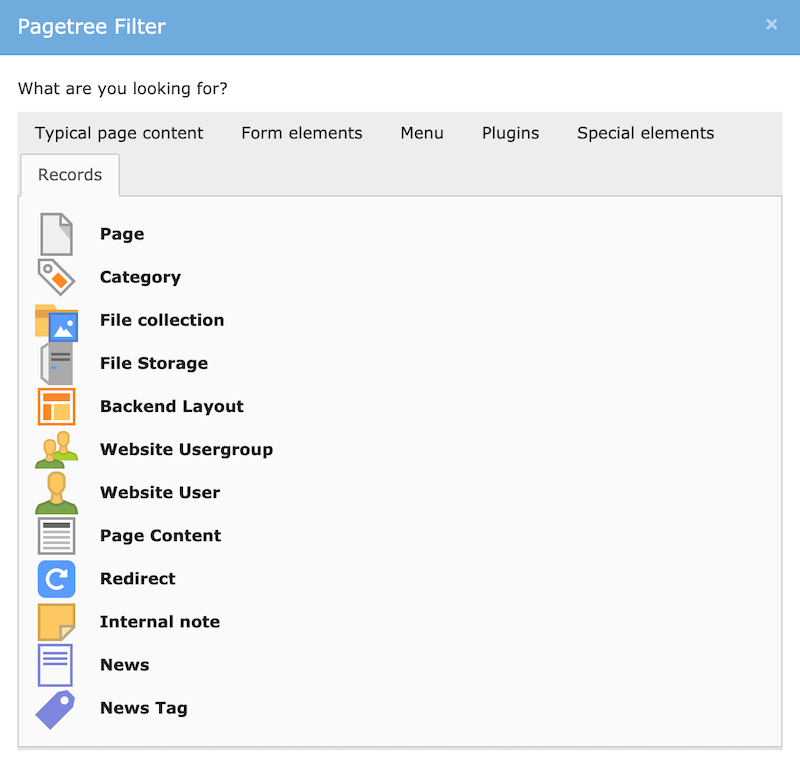

- use constraints such as
  - table=pages
  - table=pages doktype=1

Watching the response times in the Network tab of the browser developer tools, I also see long response times for the filterData requests, e.g. 29s.

Error messages

Browser console:

    PageTree.js?bust=1648368554:486 Uncaught SyntaxError: Unexpected token < in JSON at position 0
        at JSON.parse (<anonymous>)
        at Object.<anonymous> (d3.js?bust=1648368554:8:28915)
        at XMLHttpRequest.c (d3.js?bust=1648368554:6:16638)

No message in TYPO3 log (log file with ERROR log level).
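
The "Unexpected token <" part suggests, I think, that the response body started with `<`, i.e. something like an HTML error or timeout page came back instead of JSON (I have not confirmed what the server actually returned). A minimal sketch in Python, not the TYPO3 or PageTree.js code, of how a client could detect this case and show a readable message instead of failing in the JSON parser. The function name `parse_filter_response` is made up for illustration.

```python
import json


def parse_filter_response(body):
    """Return (data, error); error is a short message when body is not JSON."""
    text = body.lstrip()
    if text.startswith("<"):
        # Looks like markup, e.g. an error or timeout page instead of tree data.
        return None, "server returned HTML instead of JSON"
    try:
        return json.loads(text), None
    except json.JSONDecodeError as exc:
        # Any other malformed body (empty, truncated, ...).
        return None, f"invalid JSON: {exc.msg}"
```

With a check like this the page tree could display "filter request failed" and stay usable rather than showing an endless spinner.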

This may be related to these core issues:

Possible solution

A possible solution might be to get the number of results first and, if it is too large, either reject the constraint and fall back to the original TYPO3 behaviour or abort the current filter with an error message ("result set too large"). (I have not really thought this through, just tossing out ideas. The difficulty is not just determining what kind of result set is "too large".)
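
To make the idea concrete, here is a rough sketch of the "count first, then fetch" guard. It is written in Python against SQLite only so that it is self-contained. It is not how the extension or TYPO3 queries the database, and `MAX_FILTER_RESULTS`, `ResultSetTooLarge` and `fetch_filtered_page_ids` are hypothetical names. The threshold value is an arbitrary placeholder.

```python
import sqlite3

# Hypothetical threshold; what counts as "too large" would need tuning.
MAX_FILTER_RESULTS = 5000


class ResultSetTooLarge(Exception):
    """Raised when a filter constraint matches more pages than allowed."""


def fetch_filtered_page_ids(conn, where_sql, params=(), limit=MAX_FILTER_RESULTS):
    # where_sql is assumed to come from a trusted constraint builder,
    # never directly from user input.
    count = conn.execute(
        f"SELECT COUNT(*) FROM pages WHERE {where_sql}", params
    ).fetchone()[0]
    if count > limit:
        # Cheap early exit: the expensive fetch is never started.
        raise ResultSetTooLarge(f"result set too large: {count} > {limit}")
    rows = conn.execute(
        f"SELECT uid FROM pages WHERE {where_sql} ORDER BY uid", params
    ).fetchall()
    return [row[0] for row in rows]
```

The caller would catch `ResultSetTooLarge` and either show the error message or fall back to the unfiltered page tree. The extra `COUNT(*)` costs one more query, but it should be far cheaper than building a tree for tens of thousands of matches.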