beringresearch / lrfinder


Learning Rate Finder using Tensorflow Dataset

Hey Lr_Finder Team,

First, let me say this is a great implementation of the LR range finder algorithm; it helped me a lot. But allow me to share some issues I had with it.

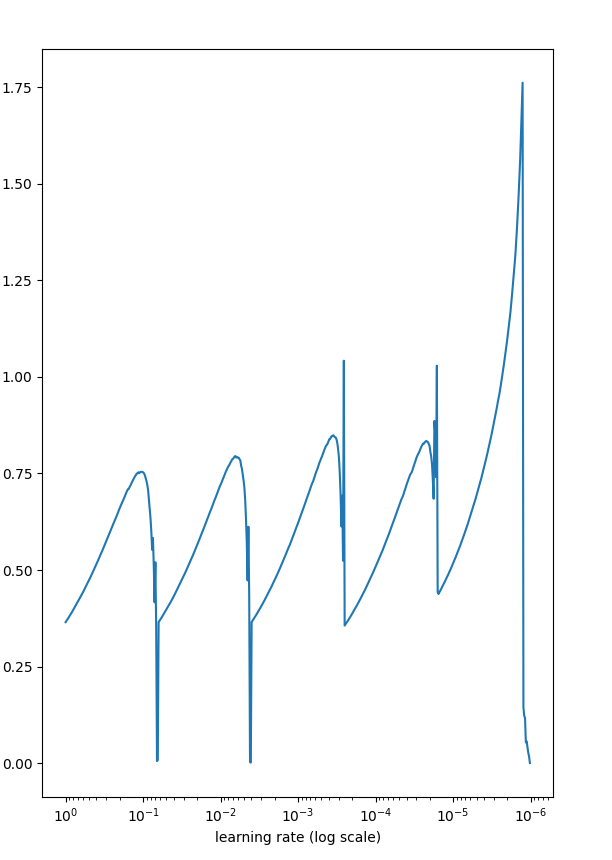

Since you adapt the learning rate after each batch, the structure of the dataset has a huge impact on the loss vs. learning rate curve. In my case, even with a shuffled dataset, I got a periodic loss pattern when I disabled the early stopping condition:

`# if batch > 5 and (math.isnan(loss) or loss > self.best_loss * 4):`

Let me show you what I mean:

And here with your default value of 5 epochs:

It gets even worse if I increase the search interval:

Whenever I searched for the best learning rate, the results differed hugely from each other, by powers of 10.

But when I use the whole epoch for the loss calculation, not only a subset of batches, I get this smoothed loss vs. learning rate graph:

I also reset the weights after each epoch. Otherwise the weights become better and better adapted to the problem over time, which also influences the loss, so the loss values measured at different learning rates would not be comparable.
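The two changes described above (one loss value per full epoch, and a weight reset before each new learning rate) can be sketched without any framework. This is only a minimal illustration under stated assumptions: the toy model `y = w * x`, the synthetic data, and the helper names `make_data`, `run_epoch` and `epoch_level_range_test` are all hypothetical and not part of lrfinder.

```python
import random


def make_data(n=64, seed=0):
    """Synthetic regression data for the toy model y = 3 * x + noise (assumption)."""
    rng = random.Random(seed)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    return [(x, 3.0 * x + rng.gauss(0.0, 0.1)) for x in xs]


def run_epoch(w, data, lr, batch_size=8):
    """One pass of mini-batch SGD; returns (new_w, mean of the per-batch losses)."""
    total, n_batches = 0.0, 0
    for i in range(0, len(data), batch_size):
        batch = data[i:i + batch_size]
        loss = sum((w * x - y) ** 2 for x, y in batch) / len(batch)
        grad = sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)
        w -= lr * grad
        total += loss
        n_batches += 1
    return w, total / n_batches


def epoch_level_range_test(data, start_lr=1e-4, end_lr=1.0, epochs=10, initial_w=0.0):
    """Try one learning rate per epoch, always starting from the same weights."""
    lr_mult = (end_lr / start_lr) ** (1.0 / epochs)
    lr = start_lr
    lrs, losses = [], []
    for _ in range(epochs):
        # Passing initial_w every time is the "reset weights" step: no epoch
        # benefits from what an earlier learning rate already learned.
        _, loss = run_epoch(initial_w, data, lr)
        lrs.append(lr)        # record the rate that produced this loss
        losses.append(loss)
        lr *= lr_mult
    return lrs, losses


if __name__ == "__main__":
    for lr, loss in zip(*epoch_level_range_test(make_data())):
        print(f"lr={lr:.5f}  epoch loss={loss:.4f}")
```

Because every epoch sees the same data from the same starting weights, each recorded loss depends only on the learning rate that was used, which is what makes the values comparable.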

In the `find` method, followed by the new `on_epoch_end` callback:

```python
# In find(): one learning rate step per epoch instead of per batch.
# The ratio needs its own parentheses because ** binds tighter than /.
self.lr_mult = (float(end_lr) / float(start_lr)) ** (1.0 / float(epochs))
self.initial_weights = self.model.get_weights()
callback = LambdaCallback(on_epoch_end=lambda epoch, logs: self.on_epoch_end(epoch, logs))


def on_epoch_end(self, epoch, logs):
    # Use the whole epoch instead of a small(er) batch, since the
    # sequential selection of data might have an influence on the loss.
    # lr = K.get_value(self.model.optimizer.lr)
    self.curr_lr = self.model.optimizer.lr.warm_lr.initial_learning_rate
    loss = logs['loss']
    self.losses.append(loss)

    # if batch > 5 and (math.isnan(loss) or loss > self.best_loss * 4):
    #     print("stop training")
    #     self.model.stop_training = True
    #     return

    if loss < self.best_loss:
        self.best_loss = loss

    self.curr_lr *= self.lr_mult
    # lr.warm_lr.initial_learning_rate = self.curr_lr
    self.learning_rates.append(self.curr_lr)
    self.model.optimizer.lr.warm_lr.initial_learning_rate = self.curr_lr
    # K.set_value(self.model.optimizer.lr, lr)

    # Reset weights after each epoch to erase the influence of
    # better weights on the loss value.
    self.model.set_weights(self.initial_weights)
```
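The multiplier is meant to take the learning rate from `start_lr` to `end_lr` in `epochs` equal multiplicative steps. A small standalone check of that arithmetic, with a hypothetical helper `geometric_lr_schedule` and example values that are my own assumptions, might look like this. It also shows why the ratio needs its own parentheses: in Python `**` binds tighter than `/`.

```python
def geometric_lr_schedule(start_lr, end_lr, epochs):
    """Learning rates from start_lr to end_lr in `epochs` multiplicative steps."""
    lr_mult = (end_lr / start_lr) ** (1.0 / epochs)
    return [start_lr * lr_mult ** k for k in range(epochs + 1)]


start_lr, end_lr, epochs = 1e-4, 1e-1, 3

schedule = geometric_lr_schedule(start_lr, end_lr, epochs)
# The last entry lands on end_lr (up to floating point rounding).

# Without the parentheses the expression parses as
# end_lr / (start_lr ** (1 / epochs)), which is a different number.
correct_mult = (end_lr / start_lr) ** (1.0 / epochs)
unparenthesized_mult = end_lr / start_lr ** (1.0 / epochs)

if __name__ == "__main__":
    print(schedule)
    print(correct_mult, unparenthesized_mult)
```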

Please note that I use a custom learning rate scheduler class, so my learning rate adaptation code differs a little from yours.

Cheers.
