Continous Control with Deep Reinforcement Learning for Unitree A1 Quadruped Robot

The goal of this project is to evaluate training methods for synthesising a control system for quadruped robot.

State-of-the-art approaches focus on Model-Predictive-Control (MPC). There are two main approaches to MPC: Model-Based and Model-Free. Model-Based MPC uses a model of the system to predict the future states of the system. Model-Free MPC uses a neural network to predict the future states of the system. The model-free approach is more flexible and can be used for systems that are not fully known. However, it is more difficult to train and requires a large amount of data.

There has been a lot of research on model-free synthesized control. This projects starts with established method Soft-Actor-Critic and tries to make an efficent reward function for control signals.

Usage

Pull repository with submodules:

git clone https://github.com/stillonearth/continuous_control-unitree-a1.git --depth 1

cd continuous_control-unitree-a1

git submodule update --init --recursive

pip install -r requirements.txtSoft-Actor-Critic

Soft Actor-Critic is model-free direct policy-optimization algorithm. It means it can be used in environment where no a-priori world and transition models are known, such as real world. Algorithm is sample-efficient because it accumulates (s,a,r,s') pairs in experience replay buffer. SAC implementation from stable_baselines3 was used in this work.

Training with control tasks

This differentiates from a previous goal as now control singal is supplied to a neural network. At each episode in training a random control task is sampled. This makes this algorithm similar to a meta-training approach such as REPTILE This is done by using the gradient of the each new task to update the single model.

Environments

1. Unitree Go-1 — Forward

This environment closely follows Ant-v4 from OpenAI Gym. The robot is rewarded for moving forward and keeping it's body within a certain range of z-positions.

2. Unitree Go-1 — Control

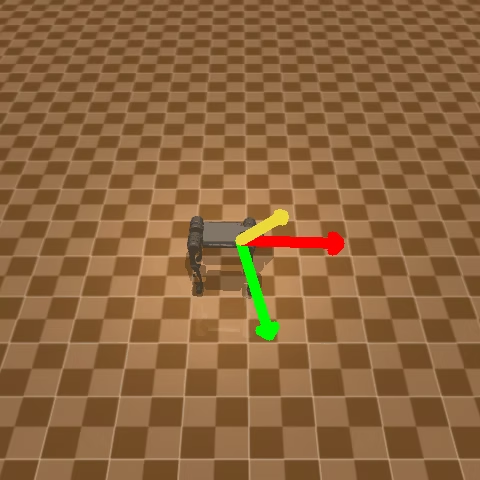

This environment also adds 2 control signals to robot's observations: velocity direction, and body orientation. The robot is rewarded for following control signals.

Results

Logs are included in logs/sac folder.

Policies after 2M episodes

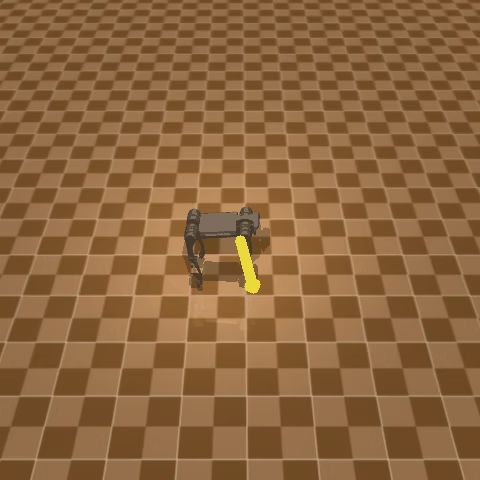

Control — Direction

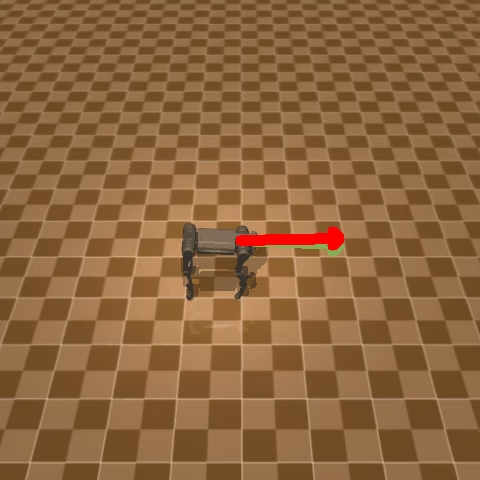

Control — Orientation

Control — Orientation + Direction

Trained for 25M episodes:

These are not state-of-the art results but can be used for futher iterating on performance of model.