I was thinking about "the importance of having a coordinate system independent

of what the source files offer" which @dirkroorda mentioned the other day, and

which was also described in the Unlocking Digital Texts Position

Paper:

From these formats, it is definitely possible to introduce a “glyph1-level” fragment addressing

scheme, comprising an offset from the start of the file. This effectively reduces all text

formats to plain-text by stripping away any additional tagging and non-textual components.

This is not an entirely trivial exercise, since some additional complexities around Unicode

normalisation rules and white-space handling will need to be dealt with, in order to ensure

that plain-text conversions are carried out in a consistent manner.

However, at this stage, it appears that it would be advantageous to also have a

higher level scheme that operates in a more “human-friendly” way, with word (or token)

granularity and some sense of semantic structure at a level similar to Markdown or a

light-TEI schema.

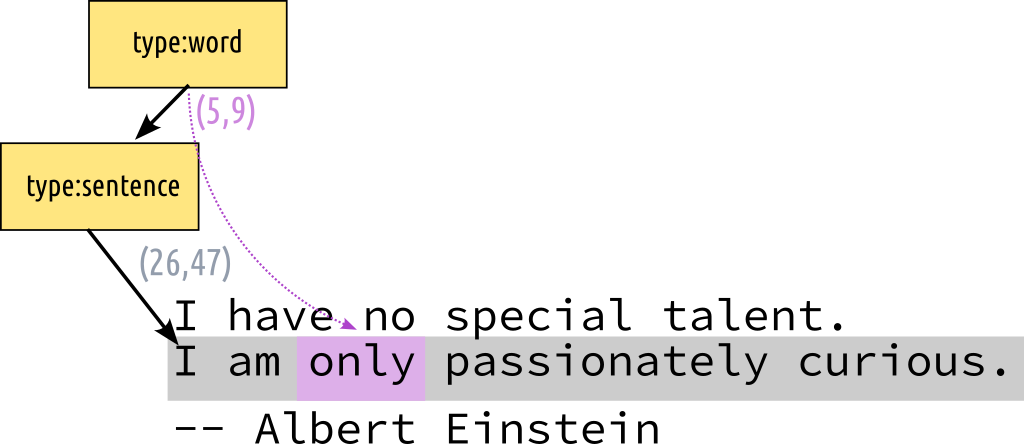

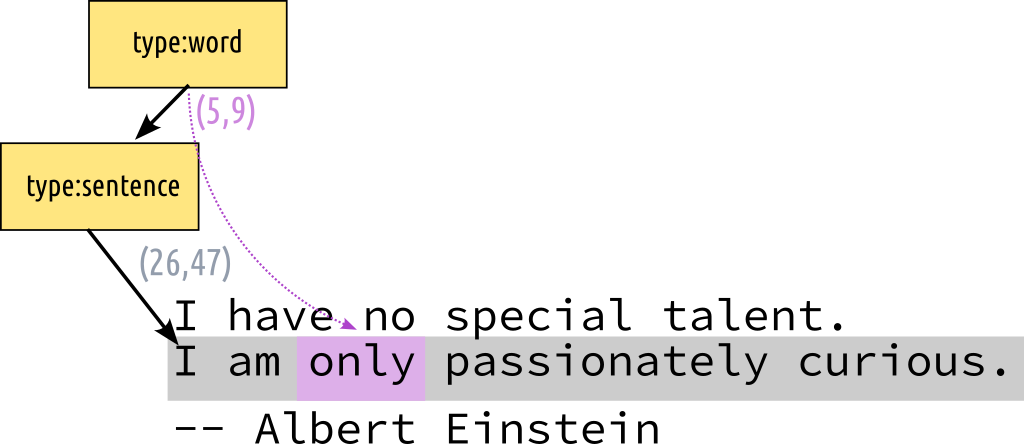

How does this relate to STAM? We have higher-order annotations that allows modelling higher-level schemes.

We can annotate a sentence and then annotate a word in that sentence using relative offsets:

The offsets still refer to the unicodepoint level, but no longer relative to

the resource as a whole but to the annotation that is being pointed at (the

sentence in this case).

The recent proposal for the STAM Baseoffset

extension is also relevant in this

because it allows us to use a start/base offset that deviates from the actual

text (a simple decoupling from the actual coordinate system, though the units

are still the same).

Next we also have our CompositeSelector (and MultiSelector) that would let us

model things the other way round, we can have the sentence be the higher-order

annotation and have it point annotations that are words, and those in turn point to the resource

(using offsets).

At this point a question arises of something we can't model in STAM yet. Our

offsets are always unicode points (as that's our most atomic unit). If you want

to address things at a higher level like described in the previous paragraph

then that requires explicitly enumerating all the targets in a

CompositeSelector/MultiSelector/DirectionalSelector. But what if we want to use

offsets in another coordinate system here? Say a selector that selects the

second up to the ninth word? Do we want a selector that can express this

whilst automatically interpolating the points in between?

Adding something like that should be possible and adds more flexibility to how

people can use STAM for modelling, but it comes at the cost of adding further

complexity to STAM. So probably it should be an extension.

Eventually we could even go as far as have a universal Selector that points to

something (resource/annotation) that is the result of a whole query. That might

subsume the above use-case as well, but would rely on several extensions (most

notably the query system which will be upcoming anyway) that are not trivial.

Text-Fabric and FoLiA both rely in the core on a coordinate system

more detached from the text, in both a text is merely an annotation like anything else.

The situation in STAM is a bit different, almost everything is an annotation

but the text itself is the primary thing an annotation points to (a slice

thereof), either directly or indirectly. I do think that's the proper method

for a standoff text annotation model.

Last, a word about complex selectors like those in the Web Annotation model

which can reference XPath and other complex file formats. I do consider these

explicitly out of scope for STAM. We want to untangle text and annotations

completely, so text is its most bare form (plain text, utf-8) and all

annotations reference that, rather than some hybrid.

I just wanted to throw all this out here to voice and hear some thoughts and if

needed have some discussion, I'm especially interested in what @dirkroorda

thinks.