- Uploading and storing the United States Geological Survey's (USGS) earthquake data in the cloud, with access through an HTTP API.

-> Click here for Video Presentation <-

- In this project I wanted to ingest JSON data into cloud storage using AWS DynamoDB.

- I created an HTTP API to access the data.

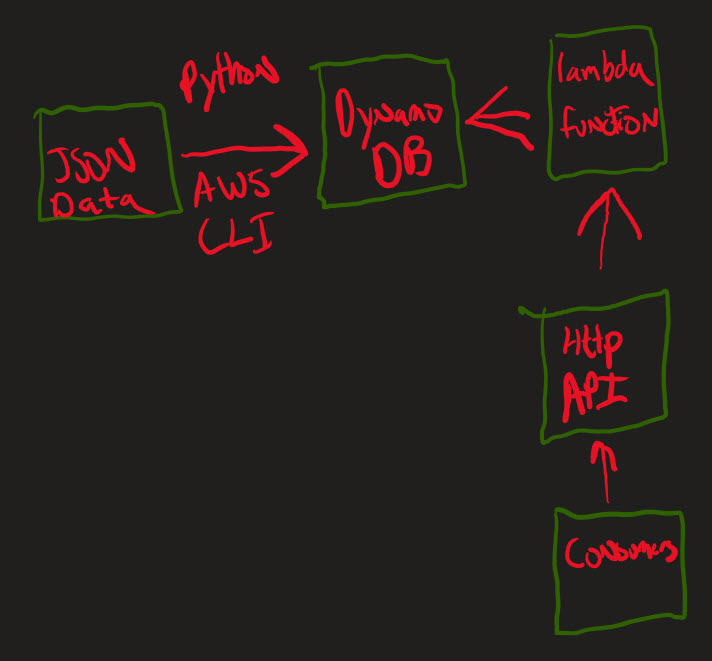

- I drew a diagram of the pipeline to plan how to build it and which tools I would need.

- The data comes from the USGS Earthquake API.

- The API lets users query the earthquake catalog in several ways, including by date range.

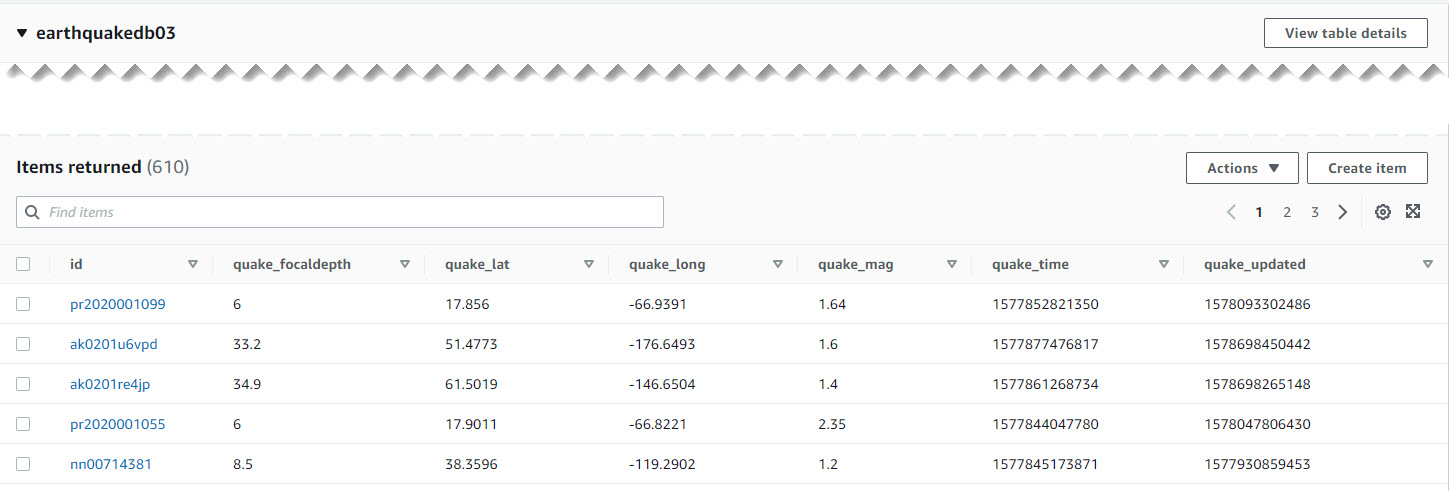

- The dataset was generated by querying all earthquakes from 2020-01-01 to 2020-01-02; this returned 610 events.
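A minimal sketch of that date-range query in Python, using only the standard library. The endpoint `https://earthquake.usgs.gov/fdsnws/event/1/query` and its `format`, `starttime`, and `endtime` parameters belong to the public USGS event service; the helper names `build_query_url` and `download_events` are hypothetical, and the original project may have fetched the file another way (for example, from a browser).

```python
from urllib.parse import urlencode
from urllib.request import urlopen

# USGS FDSN event web service endpoint
USGS_QUERY_URL = "https://earthquake.usgs.gov/fdsnws/event/1/query"


def build_query_url(start, end):
    """Return a GeoJSON query URL for all events between two YYYY-MM-DD dates."""
    params = {"format": "geojson", "starttime": start, "endtime": end}
    return f"{USGS_QUERY_URL}?{urlencode(params)}"


def download_events(start, end, path):
    """Fetch the raw GeoJSON response and save it to a local file."""
    with urlopen(build_query_url(start, end)) as response:
        with open(path, "wb") as f:
            f.write(response.read())
```

For the dates above, `download_events("2020-01-01", "2020-01-02", "earthquakes.json")` would save the raw response locally (the filename is only an example).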

- The following attributes were selected for ingestion into the database: `id`, `magnitude`, `focaldepth`, `latitude`, `longitude`, `time`, and `update_time`.

- Tools used: Python, AWS CLI, DynamoDB, Lambda, and API Gateway (HTTP API).

- After setting the request parameters to the dates I wanted, I downloaded the raw JSON file to my computer.

- The JSON file was read with Python's `json` library, and the payload was extracted into variables.
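The reading and extraction steps might look like the sketch below. It assumes the file is the GeoJSON `FeatureCollection` returned by the USGS service, where magnitude and times sit under each feature's `properties` and the location is the `[longitude, latitude, depth]` array under `geometry.coordinates`. The function names are hypothetical; the output keys match the attributes selected for ingestion.

```python
import json


def extract_items(data):
    """Flatten each GeoJSON feature into the attributes chosen for DynamoDB."""
    items = []
    for feature in data["features"]:
        props = feature["properties"]
        # USGS point coordinates are ordered [longitude, latitude, depth in km]
        longitude, latitude, depth = feature["geometry"]["coordinates"]
        items.append({
            "id": feature["id"],
            "magnitude": props["mag"],
            "focaldepth": depth,
            "latitude": latitude,
            "longitude": longitude,
            "time": props["time"],            # epoch milliseconds
            "update_time": props["updated"],  # epoch milliseconds
        })
    return items


def load_earthquake_items(path):
    """Read the downloaded GeoJSON file and return the flattened items."""
    with open(path, encoding="utf-8") as f:
        return extract_items(json.load(f))
```

Unpacking the coordinate array in the loop is one way to handle the nested structure without indexing into it repeatedly.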

- A connection to the DynamoDB database was made via the boto3 library and the AWS command line interface.

- Finally, the Python code called the `put_item()` method to write each item into the Earthquake table.
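A sketch of the write step with boto3, assuming a table named `Earthquake` already exists with `id` as its partition key, and that credentials and region were configured with `aws configure`. The table name, key schema, and helper names are assumptions, not details confirmed by the project. Numeric values must already be `Decimal` rather than `float`.

```python
def get_table(table_name="Earthquake"):
    """Return a DynamoDB Table resource (table name is hypothetical)."""
    import boto3  # credentials and region come from the AWS CLI configuration
    return boto3.resource("dynamodb").Table(table_name)


def put_items(table, items):
    """Write each item with Table.put_item and return the number written."""
    written = 0
    for item in items:
        # Numbers inside Item must be Decimal, not float, or boto3 raises TypeError
        table.put_item(Item=item)
        written += 1
    return written
```

Usage would be `put_items(get_table(), items)`. For larger loads, boto3's `Table.batch_writer()` context manager groups `put_item` calls into batch writes, which would reduce the number of requests needed for the 610 events.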

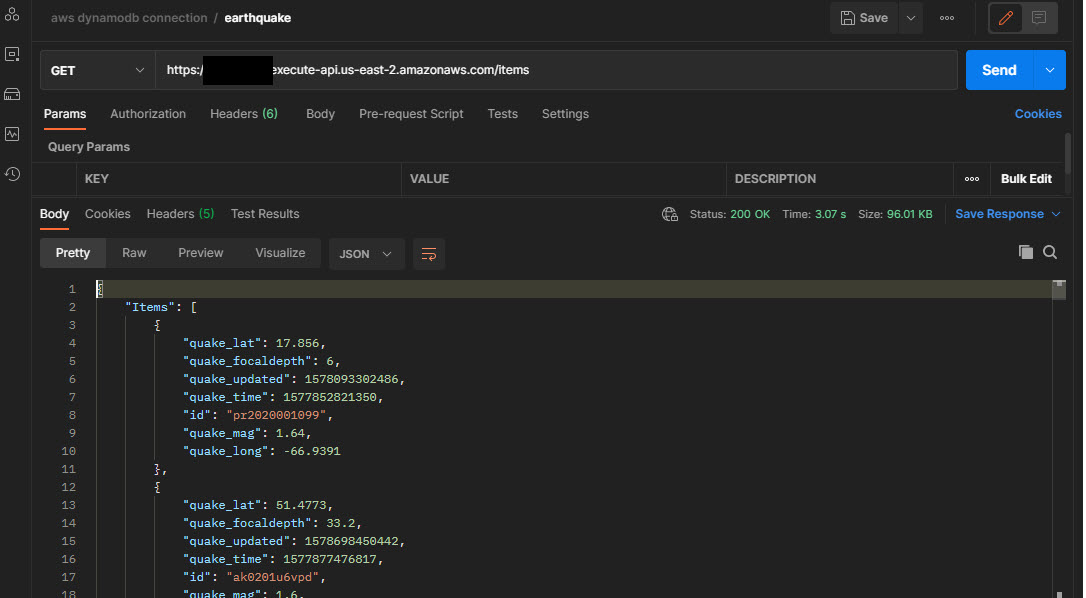

- An HTTP API was chosen for its flexibility: it can be accessed by different consumers, platforms, and technologies.

- I created an HTTP API in API Gateway, defined its routes, and integrated the routes with the Lambda function.
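A possible shape for the Lambda function behind the GET route, assuming a Python runtime, the same hypothetical `Earthquake` table, and the default `lambda_handler` entry point. DynamoDB returns numbers as `Decimal`, which `json.dumps` cannot serialize on its own, so the sketch converts them in a `default` hook. The returned dictionary follows the `statusCode`/`headers`/`body` shape API Gateway accepts from a Lambda proxy integration.

```python
import json
from decimal import Decimal


def to_json_compatible(value):
    """json.dumps default hook: convert DynamoDB Decimal numbers."""
    if isinstance(value, Decimal):
        # Keep whole numbers (e.g. epoch-millisecond timestamps) as int
        return int(value) if value == value.to_integral_value() else float(value)
    raise TypeError(f"Object of type {type(value).__name__} is not JSON serializable")


def build_response(items, status_code=200):
    """Shape the response for an API Gateway Lambda proxy integration."""
    return {
        "statusCode": status_code,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(items, default=to_json_compatible),
    }


def lambda_handler(event, context):
    """Hypothetical GET handler: scan the table and return every item."""
    import boto3  # imported here so build_response can be tested without boto3
    table = boto3.resource("dynamodb").Table("Earthquake")
    response = table.scan()
    items = response["Items"]
    # Scan returns at most 1 MB per call; follow LastEvaluatedKey for the rest
    while "LastEvaluatedKey" in response:
        response = table.scan(ExclusiveStartKey=response["LastEvaluatedKey"])
        items.extend(response["Items"])
    return build_response(items)
```

A full scan is simple for a table of a few hundred items; a query on the partition key would be the cheaper choice if the route fetched a single event.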

- Testing the API in Postman showed the GET method returning data from the table.

- Getting boto3 to ingest float data types was a struggle. The fix was to serialize the data with `json.dumps` and parse it back with `json.loads` using `parse_float=Decimal`, so every float becomes a `Decimal`.
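A minimal sketch of that workaround. boto3 rejects Python `float` values with a `TypeError`, so the data is dumped to a JSON string and parsed back with `parse_float=Decimal`. Parsing from the string, rather than calling `Decimal(x)` on each float, keeps short values such as `0.1` exact instead of carrying the float's binary rounding error. The helper name `floats_to_decimal` is hypothetical.

```python
import json
from decimal import Decimal


def floats_to_decimal(data):
    """Round-trip through JSON so every float becomes a Decimal boto3 accepts."""
    # Integers are left as int; only values parsed as floats become Decimal
    return json.loads(json.dumps(data), parse_float=Decimal)
```

Applied to each flattened item (or to the whole list) just before `put_item`, this handles nested dictionaries and lists in one call.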

- Accessing nested JSON data structures with Python loops.

- This pipeline project demonstrates one way to store JSON data in a DynamoDB table and attach an HTTP API for consumption.