Comments (18)

I had this problem on my GTX 970. I tried adding this code to preload the models before generating audio:

preload_models(text_use_gpu=False, text_use_small=True, coarse_use_gpu=False, coarse_use_small=True, fine_use_gpu=False, fine_use_small=True, codec_use_gpu=False)

But it still gave me a CUDA out of memory error, even though I told it to use only the CPU. It turns out the generate_audio function doesn't respect the values you pass to preload_models unless the model sizes you preloaded match the sizes generate_audio defaults to.

You need to set the environment variable with SET SUNO_USE_SMALL_MODELS=True before you import bark. I ended up setting it in the batch file I use to start my program. Then it will use the small models AND it will respect the use_gpu values you pass into preload_models.

You should be able to load some of the small models on the GPU, even if you can't load all of them on GPU, but I'm tired so I'll have to test that tomorrow.
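A minimal sketch of the ordering described above, assuming (as this comment reports) that bark picks up SUNO_USE_SMALL_MODELS when it is imported. The helper name load_small_models_on_cpu is made up for illustration; the keyword arguments are the ones from the preload_models call earlier in this comment.

```python
import os

# Set the flag before anything imports bark; per the comment above,
# setting it after the import is too late.
os.environ["SUNO_USE_SMALL_MODELS"] = "True"


def load_small_models_on_cpu():
    """Hypothetical helper: import bark lazily, then preload small models on the CPU."""
    # Imported inside the function so the environment variable is already in place.
    from bark import preload_models

    preload_models(
        text_use_gpu=False,
        text_use_small=True,
        coarse_use_gpu=False,
        coarse_use_small=True,
        fine_use_gpu=False,
        fine_use_small=True,
        codec_use_gpu=False,
    )
```

Call load_small_models_on_cpu() once at startup, before the first generate_audio call.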

from bark.

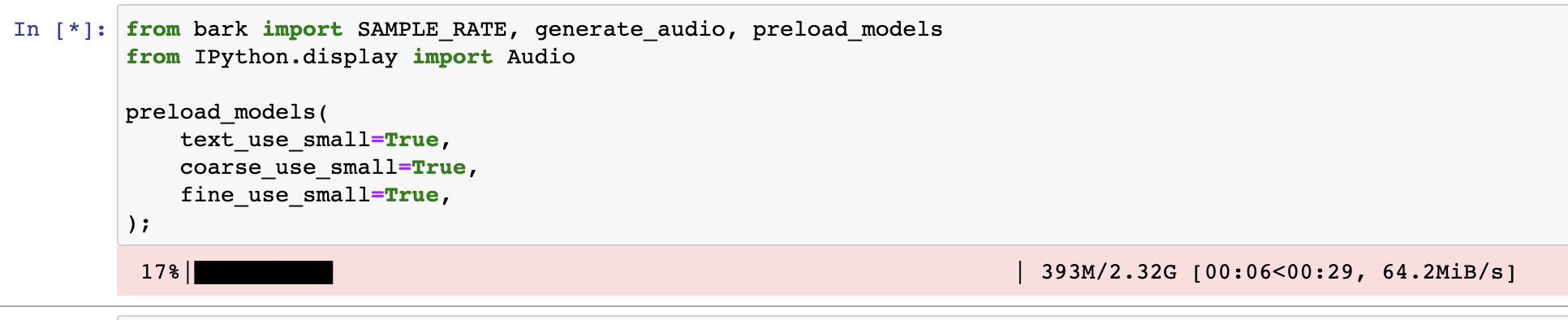

from bark import SAMPLE_RATE, generate_audio, preload_models
from IPython.display import Audio

#import gc
#gc.collect()
#torch.cuda.empty_cache()

# download and load all models
#preload_models()
preload_models(
    text_use_small=True,
    coarse_use_small=True,
    fine_use_gpu=True,
    fine_use_small=True,
)

# generate audio from text

text_prompt = """

Hello, my name is Suno. And, uh — and I like pizza. [laughs]

But I also have other interests such as playing tic tac toe.

"""

audio_array = generate_audio(text_prompt)

# play text in notebook
Audio(audio_array, rate=SAMPLE_RATE)

I ran the above and got the error below:

OutOfMemoryError: CUDA out of memory. Tried to allocate 44.00 MiB (GPU 0; 3.82 GiB total capacity; 2.40 GiB already allocated; 30.75 MiB free; 2.54 GiB reserved in total by PyTorch) If reserved memory is >> allocated memory try setting max_split_size_mb to avoid fragmentation. See documentation for Memory Management and PYTORCH_CUDA_ALLOC_CONF

I then set:

export PYTORCH_CUDA_ALLOC_CONF=garbage_collection_threshold:0.6,max_split_size_mb:21

After that, the code ran on the next ipython main.py.

Note the fine_use_gpu=True.
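The same allocator settings can also be applied from Python instead of the shell. This is only a sketch: it assumes the variable is set before torch or bark is imported, and build_alloc_conf is a made-up helper name, not part of PyTorch.

```python
import os


def build_alloc_conf(options):
    """Join allocator options into the comma-separated key:value form shown above."""
    return ",".join(f"{key}:{value}" for key, value in options.items())


# Values copied from the export line above; tune them for your own GPU.
alloc_conf = build_alloc_conf(
    {"garbage_collection_threshold": 0.6, "max_split_size_mb": 21}
)

# Set this before importing torch or bark so the CUDA allocator sees it.
os.environ["PYTORCH_CUDA_ALLOC_CONF"] = alloc_conf
```

Put this at the very top of main.py, above the bark import.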

from bark.

Tried on my 4 GB GPU, oh man, it worked! Thanks.

from bark.

got the same problem !

from bark.

Same problem on a 3060 Ti with 8 GB VRAM while loading the model on my local install. It works fine for me on Colab, though, for some reason.

from bark.

Try using the small models. Set the environment variable SUNO_USE_SMALL_MODELS="True".

from bark.

Like this?:

import os
os.environ["SUNO_USE_SMALL_MODELS"] = "True"

from bark.

Yup that should do it

from bark.

Still got the same error:

torch.cuda.OutOfMemoryError: CUDA out of memory. Tried to allocate 12.00 MiB (GPU 0; 8.00 GiB total capacity; 7.30 GiB already allocated; 0 bytes free; 7.33 GiB reserved in total by PyTorch) If reserved memory is >> allocated memory try setting max_split_size_mb to avoid fragmentation. See documentation for Memory Management and PYTORCH_CUDA_ALLOC_CONF

This is the code I am using:

from bark import SAMPLE_RATE, generate_audio, preload_models
import os
os.environ["SUNO_USE_SMALL_MODELS"] = "True"
preload_models()

from bark.

Any fix for this?

from bark.

Yup that should do it

doesn't work for me, same error

from bark.

made a new update where you can control model size and location (gpu/cpu) directly on load, like this for example:

preload_models(
    text_use_small=True,
    coarse_use_small=True,
    fine_use_gpu=False,
    fine_use_small=True,
)
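One way to use these per-model switches is to decide at startup whether the GPU has enough free memory and fall back to the CPU otherwise. The sketch below is an assumption-laden illustration: the 4 GiB budget is a made-up threshold rather than a measured requirement, and choose_preload_kwargs and free_gpu_bytes are hypothetical helper names. The keyword names are the ones used with preload_models elsewhere in this thread.

```python
def choose_preload_kwargs(free_bytes, gpu_budget_bytes=4 * 1024**3):
    """Return preload_models keyword arguments for the given free GPU memory.

    gpu_budget_bytes is a made-up threshold, not a measured requirement.
    """
    use_gpu = free_bytes >= gpu_budget_bytes
    return {
        "text_use_gpu": use_gpu,
        "text_use_small": True,
        "coarse_use_gpu": use_gpu,
        "coarse_use_small": True,
        "fine_use_gpu": use_gpu,
        "fine_use_small": True,
        "codec_use_gpu": use_gpu,
    }


def free_gpu_bytes():
    """Free memory on the current CUDA device, or 0 if torch or CUDA is unavailable."""
    try:
        import torch
    except ImportError:
        return 0
    if not torch.cuda.is_available():
        return 0
    free, _total = torch.cuda.mem_get_info()
    return free
```

Usage would then look like preload_models(**choose_preload_kwargs(free_gpu_bytes())).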

from bark.

Has anyone tried this? How did it go?

from bark.

from bark import SAMPLE_RATE, generate_audio, preload_models
from IPython.display import Audio

# download and load all models
preload_models(
    text_use_small=True,
    coarse_use_small=True,
    fine_use_gpu=False,
    fine_use_small=True,
)

# generate audio from text

text_prompt = """

Hello, my name is Suno. And, uh — and I like pizza. [laughs]

But I also have other interests such as playing tic tac toe.

"""

audio_array = generate_audio(text_prompt)

# play text in notebook
Audio(audio_array, rate=SAMPLE_RATE)

from bark.

It appears the script does not clear the cache. The first time, I was able to get the script running. I then ran the exact same script again and got the following:

torch.cuda.OutOfMemoryError: CUDA out of memory. Tried to allocate 20.00 MiB (GPU 0; 3.82 GiB total capacity; 2.75 GiB already allocated; 20.00 MiB free; 2.88 GiB reserved in total by PyTorch) If reserved memory is >> allocated memory try setting max_split_size_mb to avoid fragmentation. See documentation for Memory Management and PYTORCH_CUDA_ALLOC_CONF

No changes to the code or env. If I were to guess, it appears the memory is not being cleared on CUDA. Script is very cool though! Thanks for providing the source on this!
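The commented-out gc.collect() and torch.cuda.empty_cache() lines in an earlier snippet in this thread point at one thing to try between runs. A rough sketch, with release_cuda_cache as a made-up helper name; it can only release memory that nothing references any more, so if a previous notebook kernel or process still holds the models, restarting it is the more reliable fix.

```python
import gc


def release_cuda_cache():
    """Run garbage collection, then ask PyTorch to release cached CUDA blocks.

    Returns True if the CUDA cache was emptied, False if torch or CUDA is unavailable.
    """
    gc.collect()
    try:
        import torch
    except ImportError:
        return False
    if not torch.cuda.is_available():
        return False
    torch.cuda.empty_cache()
    return True
```

Calling release_cuda_cache() before preload_models() on a rerun is cheap, though it is not guaranteed to fix the error above.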

from bark.

You need to set the environment variable with SET SUNO_USE_SMALL_MODELS=True before you import bark. I ended up setting it in the batch file I use to start my program. Then it will use the small models AND it will respect the use_gpu values you pass into preload_models.

Seconding this. Without setting the environment variable before importing bark, the smaller models will not download unless you change the preload_models() arguments instead.

from bark.

hmmm, definitely downloads the small version for me...

from bark.

They do when calling preload_models with those options. I was referring to when only using the env var.

from bark.
