Comments (8)

You mean that "it is forbidden by the Terms of Service (ToS) of OpenAI ChatGPT". Thank you for your response to this issue.

Maybe the open-source community can find other ways to train a ChatGPT-like model, especially the RL from Human Feedback part in Step 2.

Using GPT-NeoX for RLAIF (reinforcement learning from AI feedback) as a substitute for RLHF may be a plausible solution. Anthropic showed promising results with synthetic data generation. The nonprofit Ought was able to train a reward model with RLAIF for summarization using GPT-Neo (1.3B).

I am working with CarperAI and a small group to open-source a few datasets as part of a bigger project relating to this. Harrison Chase and John Nay of LangChain also offered to help. We plan to generate synthetic data for different tasks relating to SFT, RLAIF, CoT, and training the reward models.
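
To make the RLAIF idea above a bit more concrete, here is a minimal sketch of how AI-labeled preferences could feed a reward model. It is not the code used by Ought, Anthropic, or CarperAI. The `judge` callable is a hypothetical stand-in for an AI labeler, and the loss is the common pairwise preference loss `-log(sigmoid(r_chosen - r_rejected))`, which is an assumption about the training objective rather than something stated in this thread.

```python
import math


def label_pair(prompt, response_a, response_b, judge):
    """Ask an AI labeler which response is better.

    `judge` is a hypothetical callable (prompt, a, b) -> "a" or "b".
    Returns a (chosen, rejected) tuple.
    """
    verdict = judge(prompt, response_a, response_b)
    if verdict not in ("a", "b"):
        raise ValueError(f"unexpected verdict: {verdict!r}")
    if verdict == "a":
        return response_a, response_b
    return response_b, response_a


def pairwise_preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss -log(sigmoid(r_chosen - r_rejected)).

    Written as softplus(-margin) in a numerically stable form.
    """
    margin = reward_chosen - reward_rejected
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))
```

In a real setup the two rewards would come from a trainable reward model scoring the chosen and rejected responses, and the loss would be minimized with gradient descent. The only difference from RLHF in this sketch is that the preference label comes from a model instead of a human annotator.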

from palm-rlhf-pytorch.

Please check this

https://github.com/LAION-AI/Open-Assistant

It is possible to use OpenAI's ChatGPT to train our own ChatGPT-like model.

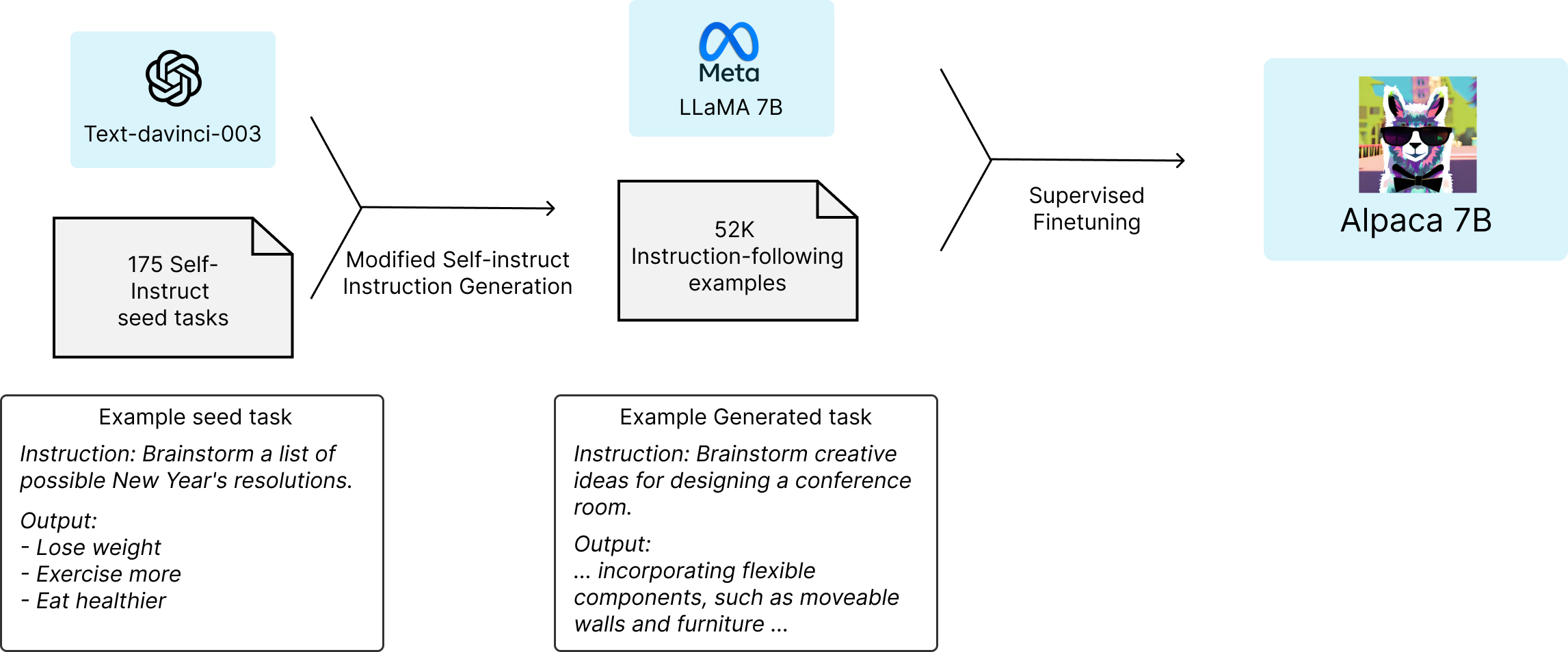

The figure below illustrates how we obtained the Alpaca model. For the data, we generated instruction-following demonstrations by building upon the self-instruct method. We started with the 175 human-written instruction-output pairs from the self-instruct seed set. We then prompted text-davinci-003 to generate more instructions using the seed set as in-context examples. We improved over the self-instruct method by simplifying the generation pipeline (see details in GitHub) and significantly reduced the cost. Our data generation process results in 52K unique instructions and the corresponding outputs, which cost less than $500 using the OpenAI API.

https://crfm.stanford.edu/2023/03/13/alpaca.html

https://github.com/tatsu-lab/stanford_alpaca
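
The data-generation step described above can be sketched roughly as follows. This is a simplified illustration, not the Alpaca pipeline itself: the prompt wording, the function names `build_generation_prompt` and `deduplicate`, and the exact-match deduplication are all assumptions made for the example. The call to the language model is deliberately left out.

```python
import random


def build_generation_prompt(seed_tasks, num_examples=3, rng=None):
    """Build a few-shot prompt from seed instruction-output pairs.

    `seed_tasks` is a list of dicts with "instruction" and "output" keys.
    The prompt wording here is hypothetical.
    """
    rng = rng or random.Random()
    examples = rng.sample(seed_tasks, k=min(num_examples, len(seed_tasks)))
    lines = ["Come up with a new task. Here are some examples:", ""]
    for i, task in enumerate(examples, start=1):
        lines.append(f"{i}. Instruction: {task['instruction']}")
        lines.append(f"   Output: {task['output']}")
    # Leave the next slot open for the model to complete.
    lines.append(f"{len(examples) + 1}. Instruction:")
    return "\n".join(lines)


def deduplicate(instructions):
    """Keep the first occurrence of each instruction.

    Compares case-insensitively with whitespace collapsed. This is a
    simplified stand-in for a real similarity filter.
    """
    seen = set()
    unique = []
    for text in instructions:
        key = " ".join(text.lower().split())
        if key not in seen:
            seen.add(key)
            unique.append(text)
    return unique
```

Each prompt would be sent to the model, the completions parsed into new instruction-output pairs, and the results passed through a filter such as `deduplicate` before being added to the dataset.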

Thanks a lot for your insightful sharing :)

Can you please explain how the training method you used is compatible with the ChatGPT and Llama 2 ToS?
