
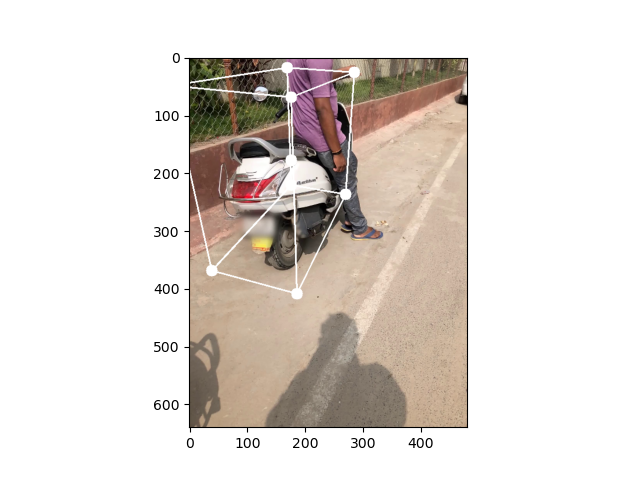

Hi, I think I figured out how to use the intrinsic matrix to project 3D points; see `project_by_intrinsics()`. The difficult part is the frame transformation between the Objectron frame (x down, y right, z in) and the frame used for projection in the OpenCV / Hartley-Zisserman (H-Z) framework (x right, y down, z out). Additionally, the Objectron intrinsic matrix has px and py swapped because the images are in portrait mode (as pointed out here), so we also have to swap x and y.

```python
import hub
import matplotlib.pyplot as plt
import cv2
import numpy as np


def draw_box(img, arranged_points, save_path):
    """
    Plot arranged_points on img and save the figure to save_path.

    arranged_points is in image coordinates: [[x, y], ...].
    Index 0 (the center point) is not part of any edge.
    """
    RADIUS = 10
    COLOR = (255, 255, 255)
    EDGES = [
        [1, 5], [2, 6], [3, 7], [4, 8],  # lines along x-axis
        [1, 3], [5, 7], [2, 4], [6, 8],  # lines along y-axis
        [1, 2], [3, 4], [5, 6], [7, 8],  # lines along z-axis
    ]
    for x, y in arranged_points:
        # A negative thickness (-1, i.e. cv2.FILLED) draws a filled circle.
        cv2.circle(img, (int(x), int(y)), RADIUS, COLOR, -1)
    for start, end in EDGES:
        start_x, start_y = arranged_points[start]
        end_x, end_y = arranged_points[end]
        cv2.line(img, (int(start_x), int(start_y)), (int(end_x), int(end_y)), COLOR, 2)
    plt.imshow(img)
    plt.savefig(save_path)
    plt.close()


def project_by_intrinsics(element):
    """
    Project using camera intrinsics.

    References:
    https://github.com/google-research-datasets/Objectron/issues/39#issuecomment-835509430
    https://amytabb.com/ts/2019_06_28/

    Objectron frame (x down, y right, z in);
    H-Z frame (x right, y down, z out);
    Objectron intrinsics have px and py swapped;
    px and py are given for the original image size (1440, 1920).

    Approach 1:
        To transform the Objectron frame to the H-Z frame,
        we need z <-- -z and to swap x and y;
        to modify the intrinsics, we need to swap px and py.
    Approach 2 (used below):
        change the sign of z before projection and swap x and y after projection.
    """
    # Copy (np.array copies by default) so the caller's arrays are not modified in place.
    vertices_3d = np.array(element['point_3d'], dtype=np.float64).reshape(9, 3)
    # Objectron frame to H-Z frame
    vertices_3d[:, 2] = -vertices_3d[:, 2]
    intr = np.array(element['camera_intrinsics'], dtype=np.float64).reshape(3, 3)
    # Scale intrinsics from (1920, 1440) to (640, 480)
    intr[:2, :] = intr[:2, :] / np.array([[1920], [1440]]) * np.array([[640], [480]])
    point_2d = intr @ vertices_3d.T
    point_2d[:2, :] = point_2d[:2, :] / point_2d[2, :]
    # Landscape to portrait: swap x and y.
    point_2d[[0, 1], :] = point_2d[[1, 0], :]
    return point_2d.T[:, :2]


def project_by_camera_projection(element):
    """
    References:
    https://github.com/google-research-datasets/Objectron/blob/master/notebooks/objectron-geometry-tutorial.ipynb
    (function project_points)
    http://www.songho.ca/opengl/gl_projectionmatrix.html
    """
    vertices_3d = np.asarray(element['point_3d']).reshape(9, 3)
    vertices_3d_homg = np.concatenate(
        (vertices_3d, np.ones_like(vertices_3d[:, :1])), axis=-1).T
    vertices_2d_proj = np.asarray(element['camera_projection']).reshape(4, 4) @ vertices_3d_homg
    # Perspective divide: homogeneous clip coordinates -> normalized device coordinates (NDC)
    points2d_ndc = (vertices_2d_proj[:-1, :] / vertices_2d_proj[-1, :]).T
    # Convert the projected points from NDC to pixel values.
    # Note that NDC x and y are swapped here.
    x = points2d_ndc[:, 1]
    y = points2d_ndc[:, 0]
    pt2d = np.empty((points2d_ndc.shape[0], 2))
    pt2d[:, 0] = (1 + x) / 2 * element['image_width']
    pt2d[:, 1] = (1 + y) / 2 * element['image_height']
    return pt2d


def project_by_point2d(element):
    """
    Reference: https://app.activeloop.ai/google/bike (function get_bbox)
    """
    # The first two columns of point_2d are normalized (x, y); copy before scaling.
    arranged_points = np.array(element['point_2d'], dtype=np.float64).reshape(9, 3)
    arranged_points[:, 0] *= element['image_width']
    arranged_points[:, 1] *= element['image_height']
    return arranged_points[:, :2]


if __name__ == '__main__':
    frame_ids = [0]
    bikes = hub.Dataset("google/bike")  # load the dataset once, outside the loop
    for idx in frame_ids:
        element = bikes[idx].compute()
        pt2d_point2d = project_by_point2d(element)
        draw_box(element['image'].copy(), pt2d_point2d, f'point2d_{idx}.png')
        pt2d_cam_proj = project_by_camera_projection(element)
        draw_box(element['image'].copy(), pt2d_cam_proj, f'camera_projection_{idx}.png')
        pt2d_intr = project_by_intrinsics(element)
        draw_box(element['image'].copy(), pt2d_intr, f'camera_intrinsics_{idx}.png')
```
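The `project_by_intrinsics()` docstring lists two routes to the same pixels. As a sanity check on synthetic numbers (not Objectron data; `approach_1`, `approach_2` and `SWAP_XY` are hypothetical names), the sketch below implements both and compares them. One assumption worth flagging: for Approach 1 it swaps the whole first two rows and columns of K, so fx and fy are exchanged along with px and py. This reduces to swapping only px and py when fx == fy.

```python
import numpy as np

# Permutation matrix that swaps the first two axes (x <-> y).
SWAP_XY = np.array([[0.0, 1.0, 0.0],
                    [1.0, 0.0, 0.0],
                    [0.0, 0.0, 1.0]])


def approach_1(points_obj, K):
    """Convert points to the H-Z frame first, then project with a swapped K."""
    # z <-- -z and swap x and y
    points_hz = points_obj[:, [1, 0, 2]] * np.array([1.0, 1.0, -1.0])
    K_swapped = SWAP_XY @ K @ SWAP_XY  # row 0 now holds (fy, py), row 1 (fx, px)
    uvw = points_hz @ K_swapped.T
    return uvw[:, :2] / uvw[:, 2:3]


def approach_2(points_obj, K):
    """Negate z, project with K as stored, then swap x and y afterwards."""
    points = points_obj * np.array([1.0, 1.0, -1.0])
    uvw = points @ K.T
    uv = uvw[:, :2] / uvw[:, 2:3]
    return uv[:, [1, 0]]


# Made-up intrinsics (fx != fy on purpose) and points in front of the camera,
# i.e. with negative z in the Objectron frame.
K = np.array([[480.0, 0.0, 320.0],
              [0.0, 490.0, 240.0],
              [0.0, 0.0, 1.0]])
rng = np.random.default_rng(0)
points_obj = rng.uniform(-0.5, 0.5, size=(9, 3))
points_obj[:, 2] -= 2.0  # z now lies in [-2.5, -1.5]

uv1 = approach_1(points_obj, K)
uv2 = approach_2(points_obj, K)
print(np.allclose(uv1, uv2))  # True
```

Algebraically, `SWAP_XY @ K @ SWAP_XY` applied to the swapped points equals `SWAP_XY` applied to the projection of the unswapped points, and the third (depth) component is untouched, so the two routes agree exactly.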

Hi DeriZSY, if you want to use the 3x3 camera intrinsic matrix, you can parse it from the a_r_capture_metadata_pb2 proto using the following line from the objectron-geometry-tutorial:

```python
intrinsics = np.array(data.camera.intrinsics).reshape(3, 3)
```

For the 4x4 view_matrix, I am guessing you were referring to the projection_matrix that we used for projecting the points from 3D to 2D in our code. It is the approach commonly used in computer graphics, and it is essentially the counterpart of the camera intrinsic matrix that is popular in the 3D geometry community. For more details, see this OpenGL tutorial.


Hi, thanks for the reply. You are right about the matrix part; I know that I can obtain the intrinsics from the data. In the annotation for each frame, the 3D box corner points (plus a center point) are provided in the camera frame and can be projected to 2D using projection_matrix.

My question is: how should I modify the 3D point annotations so that I can project them with the intrinsics instead of projection_matrix, in the traditional computer-vision way, i.e. pts2d = K * pts3d (up to the perspective divide)?

To solve for the object pose, the usual approach is to estimate the 2D corner and center points with a CNN, then solve PnP using the estimated 2D points, the 3D corner points, and the intrinsic matrix. According to the paper, Google MediaPipe uses exactly this approach.

How would you make it work if the 3D point annotations can only be projected with projection_matrix and not with the intrinsics? (I'd be glad to know if there is a workaround.)
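The pose-recovery step described above (2D keypoints + 3D box points + K, then PnP) can be illustrated with a minimal linear solver. This is a sketch under stated assumptions, not MediaPipe's implementation: it swaps in the classic Direct Linear Transform (DLT) in plain NumPy, assumes the camera frame follows the OpenCV convention (z pointing forward) so that pixels = K [R | t] X, and checks itself on synthetic data. The helpers `box_keypoints`, `project` and `pnp_dlt` are hypothetical; in practice `cv2.solvePnP` is the usual choice.

```python
import itertools

import numpy as np


def box_keypoints(sx, sy, sz):
    """Center plus 8 corners of an axis-aligned box in the object frame."""
    corners = [
        (x * sx / 2, y * sy / 2, z * sz / 2)
        for x, y, z in itertools.product((-1, 1), repeat=3)
    ]
    return np.array([(0.0, 0.0, 0.0)] + corners)


def project(K, R, t, X):
    """Pinhole projection pixels = K (R X + t), camera z pointing forward."""
    X_cam = X @ R.T + t
    uvw = X_cam @ K.T
    return uvw[:, :2] / uvw[:, 2:3]


def pnp_dlt(X, uv, K):
    """Linear PnP: solve for P = K [R | t] via DLT, then extract R and t."""
    rows = []
    for (u, v), Xw in zip(uv, X):
        Xh = np.append(Xw, 1.0)
        # u * (P2 . Xh) = P0 . Xh  and  v * (P2 . Xh) = P1 . Xh
        rows.append(np.concatenate([Xh, np.zeros(4), -u * Xh]))
        rows.append(np.concatenate([np.zeros(4), Xh, -v * Xh]))
    _, _, Vt = np.linalg.svd(np.array(rows))
    P = Vt[-1].reshape(3, 4)          # P is known only up to scale
    M = np.linalg.inv(K) @ P          # proportional to [R | t]
    U, S, Vt3 = np.linalg.svd(M[:, :3])
    R = U @ Vt3                       # closest orthogonal matrix
    scale = S.mean()
    if np.linalg.det(R) < 0:          # the SVD solution may come out negated
        R, scale = -R, -scale
    t = M[:, 3] / scale
    return R, t


# Synthetic check with made-up intrinsics and pose.
K = np.array([[500.0, 0.0, 240.0],
              [0.0, 500.0, 320.0],
              [0.0, 0.0, 1.0]])
angle = 0.3
R_true = np.array([[np.cos(angle), 0.0, np.sin(angle)],
                   [0.0, 1.0, 0.0],
                   [-np.sin(angle), 0.0, np.cos(angle)]])
t_true = np.array([0.1, -0.05, 2.0])
X = box_keypoints(0.4, 0.3, 0.6)
uv = project(K, R_true, t_true, X)
R_est, t_est = pnp_dlt(X, uv, K)
print(np.allclose(R_est, R_true, atol=1e-6), np.allclose(t_est, t_true, atol=1e-6))  # True True
```

With noise-free keypoints the DLT recovers the pose exactly; with noisy CNN predictions a linear estimate like this is usually refined with a nonlinear solver such as `cv2.solvePnP`.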


The projection matrix and the intrinsic matrix are basically the same. The projection matrix maps a 3D point to an image plane spanning [-1, 1] with the principal point at 0, whereas the intrinsic matrix maps it to [w, h] with the principal point at (approximately) the center [w/2, h/2]. So in the projection matrix the principal point is shifted to 0 and the focal lengths are normalized by the image width/height. You can use the intrinsic matrix directly, or read the corresponding values from the projection matrix (note that these are in normalized units, not pixels):

```python
fx, fy = proj[0, 0], proj[1, 1]
cx, cy = proj[0, 2], proj[1, 2]
```
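To make the relationship concrete, here is a simplified sketch. It assumes a plain pinhole camera with z pointing forward and ignores OpenGL's axis flips and the depth row of the 4x4 matrix, so Objectron's actual projection_matrix may differ in signs and axis order; all helper names are hypothetical. Under these assumptions the NDC "focal length" is 2·fx/w, the NDC principal point is 2·cx/w − 1 (0 when cx = w/2), and mapping NDC back to pixels with (1 + x_ndc) / 2 · w reproduces the pixel-intrinsics projection exactly.

```python
import numpy as np


def pixel_to_ndc_intrinsics(fx, fy, cx, cy, width, height):
    """Hypothetical helper: pixel intrinsics -> NDC-style coefficients."""
    return (2 * fx / width, 2 * fy / height,
            2 * cx / width - 1, 2 * cy / height - 1)


def ndc_to_pixel_intrinsics(fx_ndc, fy_ndc, cx_ndc, cy_ndc, width, height):
    """Inverse of pixel_to_ndc_intrinsics."""
    return (fx_ndc * width / 2, fy_ndc * height / 2,
            (cx_ndc + 1) * width / 2, (cy_ndc + 1) * height / 2)


def project_pixels(point, fx, fy, cx, cy):
    """Standard pinhole projection with pixel intrinsics."""
    X, Y, Z = point
    return np.array([fx * X / Z + cx, fy * Y / Z + cy])


def project_via_ndc(point, fx_ndc, fy_ndc, cx_ndc, cy_ndc, width, height):
    """Project to NDC in [-1, 1], then map NDC to pixels."""
    X, Y, Z = point
    x_ndc = fx_ndc * X / Z + cx_ndc
    y_ndc = fy_ndc * Y / Z + cy_ndc
    return np.array([(1 + x_ndc) / 2 * width, (1 + y_ndc) / 2 * height])


width, height = 480, 640
fx, fy, cx, cy = 500.0, 500.0, 242.0, 318.0
ndc = pixel_to_ndc_intrinsics(fx, fy, cx, cy, width, height)
point = np.array([0.2, -0.1, 1.5])

print(np.allclose(project_pixels(point, fx, fy, cx, cy),
                  project_via_ndc(point, *ndc, width, height)))  # True
print(np.allclose(ndc_to_pixel_intrinsics(*ndc, width, height),
                  (fx, fy, cx, cy)))  # True
```

Substituting the NDC coefficients into (1 + x_ndc) / 2 · w gives fx·X/Z + cx term by term, which is why the two routes match.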


It also depends on which camera coordinate convention you want to use. In our case, the x-axis points to the image bottom, the y-axis points to the image right, and the z-axis points away from the image. If you use a different convention, e.g. OpenCV's, you will have to convert the 3D points into your own camera coordinate frame before projecting them to 2D.
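Going by the axis description above, converting to OpenCV's convention (x right, y down, z forward) is a fixed change of basis that swaps x and y and negates z, the same "z <-- -z and swap x and y" transform as Approach 1 in the `project_by_intrinsics()` docstring. A sketch, assuming points in front of the camera have negative z in the Objectron frame (which is why that function negates z); the names `OBJECTRON_TO_OPENCV`, `points_to_opencv` and `pose_to_opencv` are hypothetical. The matrix turns out to be a proper rotation (determinant +1), so it can be applied to annotated poses as well as to points.

```python
import numpy as np

# Change of basis from the Objectron camera frame (x down, y right, z toward
# the viewer; assumed) to the OpenCV camera frame (x right, y down, z forward).
OBJECTRON_TO_OPENCV = np.array([[0.0, 1.0, 0.0],
                                [1.0, 0.0, 0.0],
                                [0.0, 0.0, -1.0]])


def points_to_opencv(points_obj):
    """Convert (N, 3) camera-frame points from Objectron to OpenCV axes."""
    return points_obj @ OBJECTRON_TO_OPENCV.T


def pose_to_opencv(R_obj, t_obj):
    """Convert an object pose (x_cam = R x_obj + t) to OpenCV camera axes."""
    return OBJECTRON_TO_OPENCV @ R_obj, OBJECTRON_TO_OPENCV @ t_obj


C = OBJECTRON_TO_OPENCV
print(np.isclose(np.linalg.det(C), 1.0))  # True: a proper rotation
print(np.allclose(C @ C.T, np.eye(3)))    # True: orthonormal

# A point 2 m in front of the camera, 0.1 toward the image bottom, 0.3 toward the right.
p_obj = np.array([[0.1, 0.3, -2.0]])
print(points_to_opencv(p_obj))  # expected values: x = 0.3, y = 0.1, z = 2.0
```

Because the conversion is a rotation rather than a reflection, a pose converted with `pose_to_opencv` still has a valid rotation matrix and can be fed directly to OpenCV-style projection or PnP code.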


@jinlinyi Thanks for the example. I would be happy to merge this into the repo if you create a pull request.
